Teams building large language models for global reach quickly hit the same wall: you cannot simply buy the conversational datasets needed for languages spoken by millions in Africa and Southeast Asia. Off-the-shelf corpora cover English, Mandarin, Spanish, and a few others reasonably well. Everything else sits in the long tail—under-documented dialects, regional accents, and everyday spoken patterns that existing web scrapes and commercial suppliers never captured in usable volume.

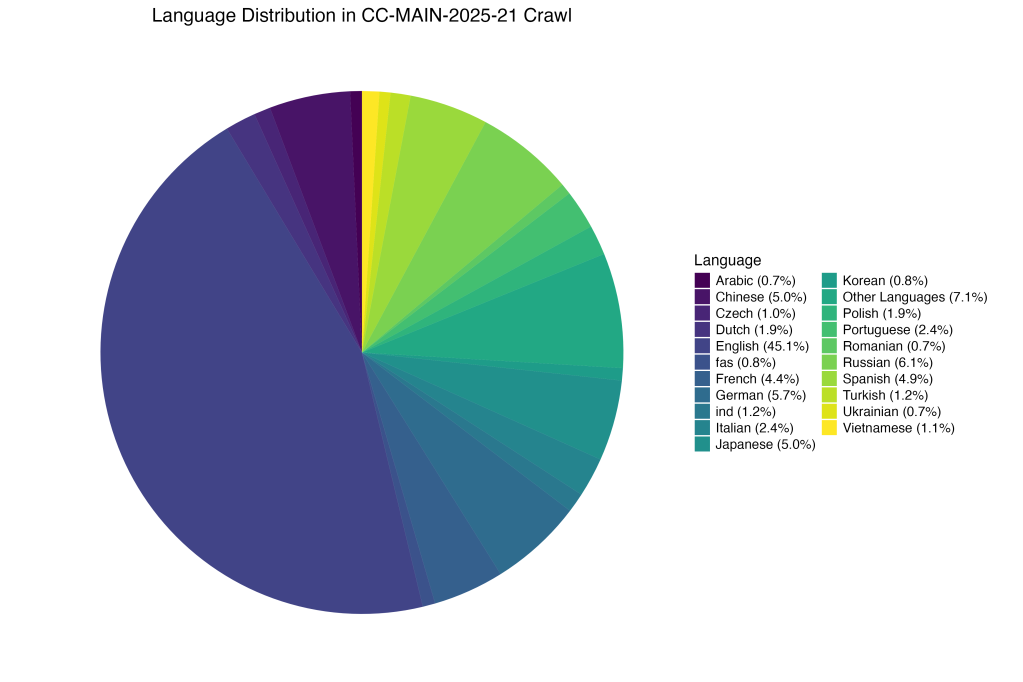

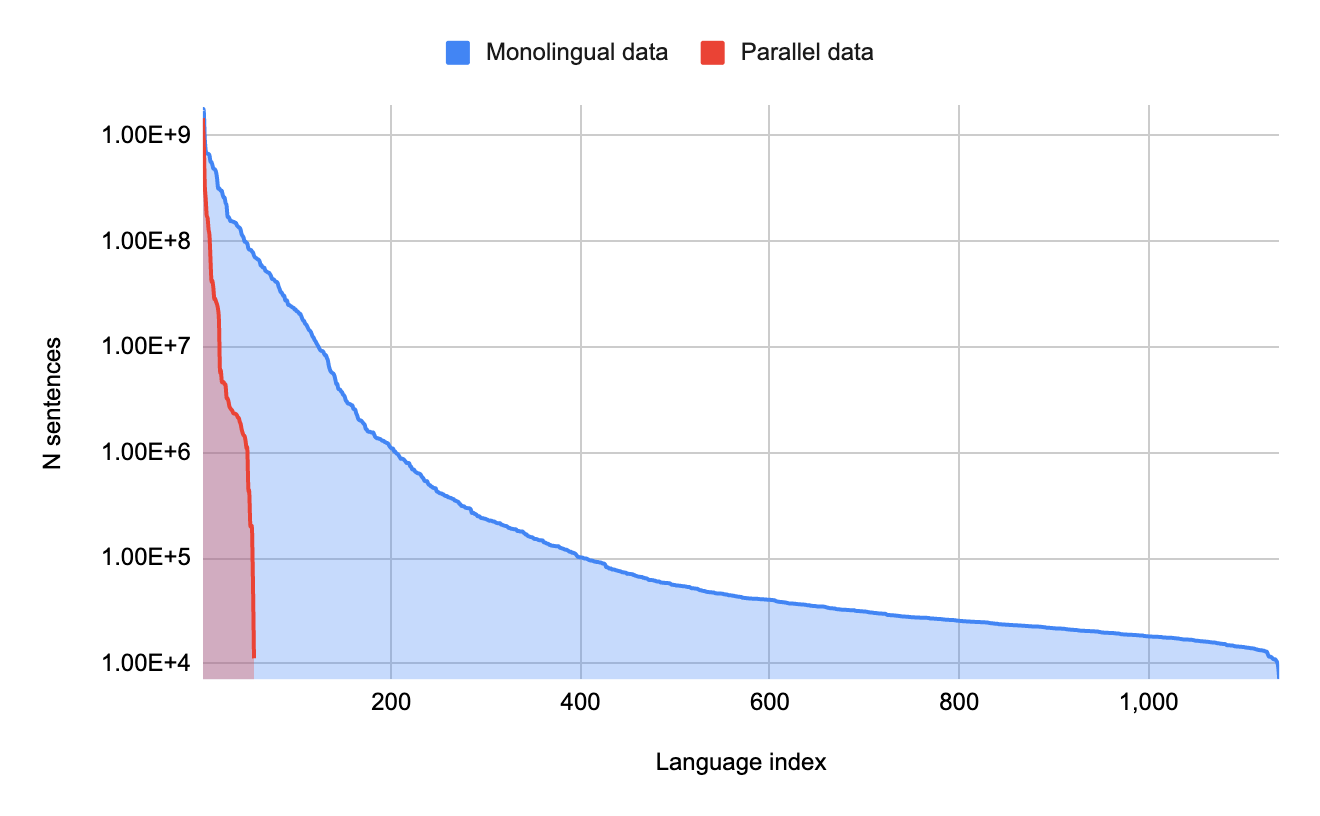

This gap is not theoretical. Recent breakdowns of training data sources show English alone accounting for roughly 45% of major web crawls, with the next tier of high-resource languages filling most of the rest. Africa, despite more than 2,000 living languages, contributes only about 2% of the data feeding today’s frontier models. Southeast Asia’s 1,300 indigenous languages fare little better; representation in common pre-training resources hovers in the low single digits for most of the region.

The practical result is easy to predict. Models trained on these skewed distributions stumble on real-world speech from Dakar, Jakarta, or rural Vietnam. They misrecognize tonal shifts, miss local idioms, and produce responses that feel culturally off. Users notice immediately, and adoption stalls.

The fix that actually scales is targeted crowdsourcing of open-ended dialogues from native speakers of specific dialects. Instead of hoping someone has already recorded the right conversations, you recruit the people who live them every day.

Research-oriented recruitment platforms allow precise screening, for example for mother-tongue speakers of Wolof (Senegal), Igbo variants (Nigeria), Javanese regional forms (Indonesia), or Tamil dialects common in Malaysian communities. Participants receive clear tasks, such as “talk naturally for 10–15 minutes about your daily routine, family life, or local markets”, and record via simple mobile apps or browser interfaces. The output is spontaneous speech, rich in prosody and code-switching, with the background noise typical of real use. Quality control comes from native validators who flag unclear segments and confirm cultural accuracy.
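As a rough illustration of what that screening step can look like once participant profiles are exported, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `Participant` fields, the `ScreeningRule` class, and the sample dialect labels are hypothetical and do not reflect any particular platform's API or data model.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Participant:
    participant_id: str
    first_language: str  # self-reported mother tongue
    country: str         # country of residence
    dialect: str         # self-reported regional variety


@dataclass(frozen=True)
class ScreeningRule:
    first_language: str
    country: str
    dialects: FrozenSet[str] = frozenset()  # empty set accepts any dialect

    def matches(self, p: Participant) -> bool:
        return (
            p.first_language == self.first_language
            and p.country == self.country
            and (not self.dialects or p.dialect in self.dialects)
        )


def screen(participants: Iterable[Participant],
           rules: Iterable[ScreeningRule]) -> List[Participant]:
    """Keep participants who satisfy at least one screening rule."""
    rules = list(rules)
    return [p for p in participants if any(r.matches(p) for r in rules)]


# Hypothetical rules and pool, for illustration only.
RULES = [
    ScreeningRule("Wolof", "Senegal"),
    ScreeningRule("Javanese", "Indonesia", frozenset({"Banyumasan"})),
]

POOL = [
    Participant("p1", "Wolof", "Senegal", "urban Dakar"),
    Participant("p2", "Javanese", "Indonesia", "Surabaya"),
    Participant("p3", "Javanese", "Indonesia", "Banyumasan"),
    Participant("p4", "Wolof", "France", "urban Dakar"),
]

eligible = screen(POOL, RULES)
print([p.participant_id for p in eligible])
```

Self-reported fields are only a first filter; the native validators described above remain the real check on whether a recording matches the target dialect.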

Studies on low-resource automatic speech recognition confirm what practitioners see on the ground: increasing speaker count and accent coverage in training data measurably improves robustness. One analysis of zero-shot accent performance found that moving from a single speaker to 15 speakers cut the character error rate from 19.8% to 15.6% with total audio hours held constant, a drop of 4.2 percentage points, or roughly 21% in relative terms. Adding genuine dialect variety further narrows the gap on unseen test accents. Models without this diversity generalize poorly; those built on it handle the messy reality of global users far more gracefully.
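For readers less familiar with the metric, character error rate is conventionally the character-level edit distance between a reference transcript and the model output, divided by the reference length. The sketch below shows that standard definition together with the relative improvement implied by the two figures quoted above. The function names are illustrative, and the study's own evaluation code is not reproduced here.

```python
def edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance: substitutions, insertions, and deletions."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            curr.append(min(
                prev[j] + 1,             # delete a reference character
                curr[j - 1] + 1,         # insert a hypothesis character
                prev[j - 1] + (r != h),  # substitute (free if they match)
            ))
        prev = curr
    return prev[-1]


def cer(ref: str, hyp: str) -> float:
    """Character error rate relative to the reference transcript."""
    if not ref:
        raise ValueError("reference transcript must not be empty")
    return edit_distance(ref, hyp) / len(ref)


def relative_reduction(before: float, after: float) -> float:
    """Fractional improvement when an error rate falls from before to after."""
    return (before - after) / before


# The figures quoted in the text: 19.8% CER down to 15.6%.
print(f"{relative_reduction(19.8, 15.6):.1%}")
```

Reporting both the absolute and the relative change makes results easier to compare across languages whose baseline error rates differ widely.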

Compliance is non-negotiable and entirely manageable when built in from the start. Every contributor receives a plain-language consent form in their own language, is told exactly how their recordings will be used and stored, and retains the right to withdraw their data. Compensation follows local living-wage standards, often higher than typical microtask rates, to attract serious participants rather than rushed ones. Anonymization strips identifiers, storage complies with regional data-protection rules (GDPR or its local equivalents, where applicable), and audit trails document every step. Platforms such as Prolific or custom community pipelines let teams apply these safeguards without reinventing the wheel.
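One way to make consent and withdrawal enforceable in the data pipeline itself is sketched below, under stated assumptions. The `ConsentRecord` fields and the `usable_recordings` helper are hypothetical, and a keyed hash of the contributor ID is pseudonymization of metadata only: it does nothing about the voice recording itself, and it is not legal advice on any specific regulation.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Dict, List, Tuple


def pseudonymize(contributor_id: str, secret_key: bytes) -> str:
    """Replace a contributor ID with a keyed hash (HMAC-SHA256, truncated)."""
    digest = hmac.new(secret_key, contributor_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


@dataclass
class ConsentRecord:
    pseudonym: str
    form_language: str       # language the consent form was presented in
    consented: bool
    withdrawn: bool = False  # set to True when a contributor withdraws


def usable_recordings(recordings: List[Tuple[str, str]],
                      consents: Dict[str, ConsentRecord]) -> List[str]:
    """Return paths whose contributor has consented and has not withdrawn.

    `recordings` is a list of (pseudonym, file_path) pairs. Recordings
    with no matching consent record are excluded by default.
    """
    usable = []
    for pseudonym, path in recordings:
        record = consents.get(pseudonym)
        if record is not None and record.consented and not record.withdrawn:
            usable.append(path)
    return usable
```

Running this filter every time a training set is assembled, rather than once at collection time, means a withdrawal recorded later still removes the contributor's audio from subsequent builds.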

Efficiency comes from focus. Scripted prompts waste time on unnatural phrasing; open dialogues deliver usable volume quickly because people already know how to talk. A well-run campaign can gather dozens of hours per target dialect per week once recruitment is dialed in. Native-led quality gates keep rejection rates low and data value high. The same infrastructure that collects raw audio also supports downstream annotation—transcription, intent labeling, or toxicity tagging—turning raw speech into training-ready dialogue corpora.
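Tracking those claims per dialect is straightforward once validator decisions are logged. The sketch below is a minimal example that assumes each validated segment is stored with a dialect label, a duration in seconds, and an accept/reject flag; the `Segment` structure is hypothetical, and measuring the rejection rate by duration rather than by segment count is a design choice, not something the approach prescribes.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Segment:
    dialect: str
    duration_s: float
    accepted: bool  # decision from a native validator


def summarize(segments: Iterable[Segment]) -> Dict[str, Dict[str, float]]:
    """Accepted hours and duration-weighted rejection rate per dialect."""
    accepted = defaultdict(float)
    total = defaultdict(float)
    for seg in segments:
        total[seg.dialect] += seg.duration_s
        if seg.accepted:
            accepted[seg.dialect] += seg.duration_s
    return {
        dialect: {
            "accepted_hours": accepted[dialect] / 3600.0,
            "rejection_rate": (
                1.0 - accepted[dialect] / total[dialect]
                if total[dialect] > 0 else 0.0
            ),
        }
        for dialect in total
    }
```

A weekly report built from a summary like this shows quickly which dialects are on track for their target hours and which recruitment or prompt designs are producing too many rejected segments.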

The payoff extends beyond technical metrics. Models trained on these long-tail datasets perform better in emerging markets, open new user bases, and reduce the risk of cultural blind spots that can damage brand trust. Companies that invest here gain a genuine competitive edge while helping close the digital language divide.

When organizations need to move from pilot collections to production-scale multilingual dialogue corpora, the difference lies in execution partners who already understand the terrain. Artlangs Translation brings exactly that depth—proficiency across more than 230 languages, built through years of specialized work in translation services, video localization, short-drama subtitle localization, game localization, multi-language dubbing for short dramas and audiobooks, and large-scale multi-language data annotation and transcription. Their track record of delivered projects shows what consistent quality and cultural fluency look like at volume. For teams serious about cracking the data scarcity problem for multilingual large models, that kind of focused experience turns the long tail into a genuine strength.