Anyone who relies on navigation apps has experienced it: the calm voice directing you straight into a dead-end alley or the wrong way down a one-way street. These frustrating moments, and far more dangerous ones for autonomous vehicles, stem from inaccuracies in the underlying map data.

High-definition (HD) maps fix this by building a living digital twin of the real world, accurate to centimeters rather than meters. Unlike ordinary maps that show roads as simple lines, HD maps layer precise geometry, semantics, and attributes so vehicles know exactly where lanes end, which light controls their path, and which nearby landmarks confirm their position. The payoff is fewer wrong turns for drivers and safer autonomy overall.

At the heart of every reliable HD map is a sophisticated data annotation pipeline. Every lane line, traffic sign, traffic light, and point of interest (POI) must be carefully labeled from raw sensor feeds. This article explores that pipeline, focusing on two challenges: keeping POI information up to date, and annotating lane lines, signs, and lights in complex 2D/3D fused views. Better annotation directly addresses the familiar problem of maps sending cars into dead ends or against traffic.

What HD Maps Really Are—and Why Precision Matters

An HD map contains four main layers: a dense 3D geometric base from LiDAR point clouds, semantic classifications for every element, POI landmarks for localization, and dynamic updates for construction or closures.

Together, these layers deliver 10–20 cm accuracy, enough for a vehicle at highway speed to hold its position within the lane without constant corrections. Standard navigation maps manage only 5–10 meters.

The numbers show the urgency. According to Fortune Business Insights, the global HD map market for autonomous vehicles reached USD 3.06 billion in 2025 and is forecast to hit USD 24.64 billion by 2034, growing at a CAGR of roughly 26%. Billions are flowing into annotation infrastructure because even small labeling errors create real safety and routing problems.
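
The quoted growth rate can be checked from the two endpoints. A compound annual growth rate (CAGR) is the constant yearly rate that turns the start value into the end value over n years, CAGR = (end / start)^(1/n) - 1. The minimal Python sketch below assumes nine compounding years between 2025 and 2034; the function name is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market size in USD billions (Fortune Business Insights figures quoted above).
# Assumption: 2025 -> 2034 counts as nine compounding years.
rate = cagr(3.06, 24.64, 2034 - 2025)
print(f"Implied CAGR: {rate:.1%}")
```

The result is about 26.1%, consistent with the roughly 26% cited in the forecast.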

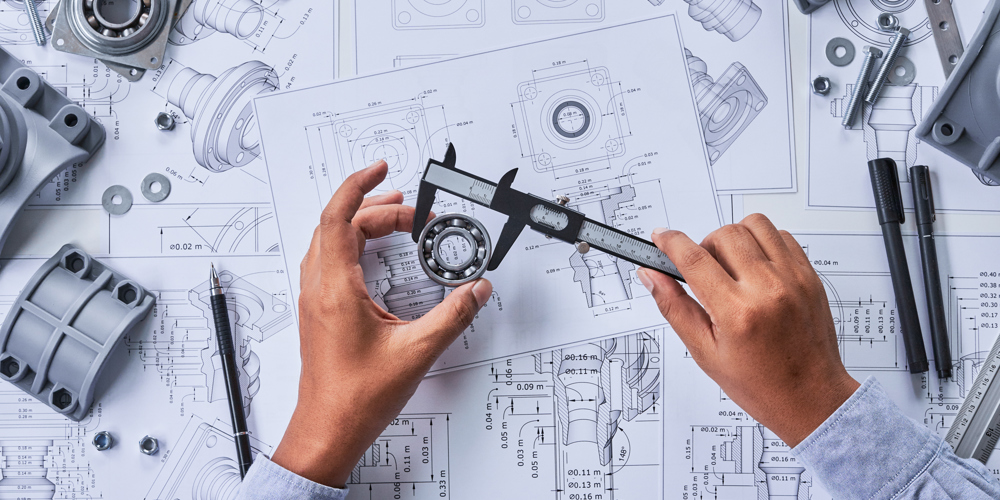

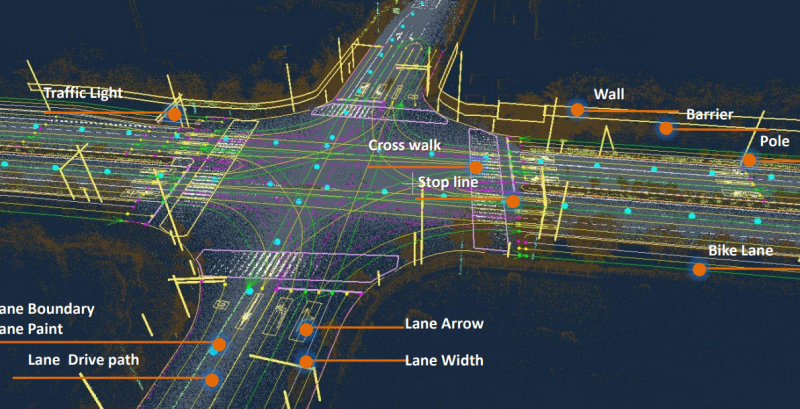

Figure 1: A fully annotated HD map intersection reveals the layered detail—lanes, lights, crosswalks, and POIs—that only meticulous annotation can deliver.

The End-to-End Annotation Pipeline

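Mapping fleets equipped with LiDAR, 360° cameras, and high-precision GNSS drive target roads repeatedly. The raw data is calibrated, individual scans are stitched into one consistent point cloud via SLAM (simultaneous localization and mapping), and the sensor streams are fused. AI models then propose initial labels.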
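
Once SLAM has estimated where the vehicle was for each scan, stitching amounts to moving every scan from the sensor frame into a shared world frame with that pose, p_world = R · p_sensor + t. The Python sketch below assumes the poses are already available (it does not implement SLAM itself), restricts rotation to yaw for brevity, and uses hypothetical helper names.

```python
import numpy as np


def yaw_rotation(yaw_rad: float) -> np.ndarray:
    """3 x 3 rotation about the vertical z axis (roll and pitch omitted for brevity)."""
    c, s = np.cos(yaw_rad), np.sin(yaw_rad)
    return np.array([[c, -s, 0.0],
                     [s, c, 0.0],
                     [0.0, 0.0, 1.0]])


def to_world(points_sensor: np.ndarray, rotation: np.ndarray, translation: np.ndarray) -> np.ndarray:
    """Apply p_world = R @ p_sensor + t to every row of an N x 3 array."""
    return points_sensor @ rotation.T + translation


def stitch(scans, poses) -> np.ndarray:
    """Merge scans into one world-frame cloud; poses[i] is the (R, t) for scans[i]."""
    return np.vstack([to_world(pts, r, t) for pts, (r, t) in zip(scans, poses)])


# Hypothetical example: the same sensor-frame point seen from two vehicle poses.
scan = np.array([[1.0, 0.0, 0.0]])
poses = [
    (yaw_rotation(0.0), np.zeros(3)),                       # start of the drive
    (yaw_rotation(np.pi / 2), np.array([10.0, 0.0, 0.0])),  # 10 m on, turned 90 degrees
]
cloud = stitch([scan, scan], poses)
print(np.round(cloud, 3))
```

Real pipelines use full 3D rotations (often quaternions) and per-point timestamps to correct motion during a sweep; the single rigid transform per scan keeps the idea visible.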

Human teams take over in a structured workflow: segment ground elements in bird’s-eye-view projections, label vertical objects in 3D, add attributes from RGB images, convert everything to clean vectors, and validate against ground truth. Leading platforms such as Tencent’s THMA automate 3D point extraction for poles and lights and also handle lane segmentation; this cuts manual effort, but the output still requires expert review.

The loop continues after launch: production vehicles report discrepancies, which trigger targeted re-annotation. This closed feedback loop keeps the digital twin current.
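
One way to turn raw discrepancy reports into re-annotation jobs is to group them by map tile and act only when several independent vehicles agree. The sketch below is a hypothetical illustration; the tile IDs, report fields, and the three-vehicle threshold are assumptions, not a documented production rule.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class DiscrepancyReport:
    tile_id: str     # hypothetical map-tile identifier
    vehicle_id: str  # which production vehicle sent the report
    kind: str        # e.g. "lane_shift", "missing_sign"


def tiles_to_reannotate(reports, min_vehicles: int = 3) -> list[str]:
    """Return tiles flagged by at least `min_vehicles` distinct vehicles.

    Counting distinct vehicles rather than raw reports keeps one car with a
    faulty sensor from triggering re-annotation on its own.
    """
    vehicles_per_tile = defaultdict(set)
    for report in reports:
        vehicles_per_tile[report.tile_id].add(report.vehicle_id)
    return sorted(tile for tile, vehicles in vehicles_per_tile.items()
                  if len(vehicles) >= min_vehicles)


reports = [
    DiscrepancyReport("tile_42", "car_a", "lane_shift"),
    DiscrepancyReport("tile_42", "car_b", "lane_shift"),
    DiscrepancyReport("tile_42", "car_c", "lane_shift"),
    DiscrepancyReport("tile_07", "car_a", "missing_sign"),
    DiscrepancyReport("tile_07", "car_a", "missing_sign"),  # same car twice
]
print(tiles_to_reannotate(reports))
```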

POI Information: Frequent Updates, High Stakes

POIs do double duty. For drivers they mark gas stations, shops, and parking. For autonomous systems they serve as visual anchors that correct GPS drift in tunnels or urban canyons.

Urban POI data changes fast: in busy cities, up to 20% of businesses open, close, or relocate each year. A 2021 study found over 20% of popular POI datasets had location errors exceeding 50 meters. In navigation terms, an error of that size can send a car onto the wrong service road or into a residential dead end.
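
Checking a POI layer for errors of this kind comes down to measuring the distance between the stored pin and a surveyed reference position. A minimal Python sketch using the haversine great-circle formula; the spherical-Earth radius, the 50 m tolerance borrowed from the study above, and the tuple-based record layout are assumptions for illustration.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; a spherical model is assumed


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two lat/lon points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def misplaced_pois(pois, tolerance_m: float = 50.0) -> list[str]:
    """IDs whose stored pin lies more than tolerance_m from the surveyed position.

    pois: iterable of (poi_id, (lat, lon) stored, (lat, lon) surveyed).
    """
    return [poi_id for poi_id, stored, surveyed in pois
            if haversine_m(*stored, *surveyed) > tolerance_m]


sample = [
    ("bakery", (52.5200, 13.4050), (52.5210, 13.4050)),    # about 111 m off
    ("pharmacy", (52.5200, 13.4050), (52.5201, 13.4050)),  # about 11 m off
]
print(misplaced_pois(sample))
```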

Update pipelines combine satellite imagery, filtered crowdsourced reports, vehicle camera telemetry, and occasional on-site checks. Annotators place precise 3D pins and attach rich attributes: entrance coordinates, opening hours, accessibility notes, and temporary flags for scaffolding.
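
The attributes listed above map naturally onto a typed record. The dataclass below is a hypothetical schema, not a published standard; field names, units, and the example values are assumptions.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PoiRecord:
    poi_id: str
    category: str                                   # e.g. "fuel_station", "parking"
    position: tuple[float, float, float]            # 3D pin: latitude, longitude, height in m
    entrance: Optional[tuple[float, float]] = None  # entrance lat/lon when it differs from the pin
    opening_hours: Optional[str] = None             # kept as free text in this sketch
    accessibility: list[str] = field(default_factory=list)    # e.g. "step_free_entrance"
    temporary_flags: list[str] = field(default_factory=list)  # e.g. "scaffolding"

    def is_temporarily_obstructed(self) -> bool:
        """True while any temporary flag, such as scaffolding, is attached."""
        return bool(self.temporary_flags)


station = PoiRecord(
    poi_id="poi-0001",
    category="fuel_station",
    position=(48.1374, 11.5755, 519.0),
    entrance=(48.1376, 11.5757),
    opening_hours="06:00-22:00",
    temporary_flags=["scaffolding"],
)
print(station.is_temporarily_obstructed())
```

Storing the entrance separately from the pin matters for routing: a car should be guided to where it can actually enter, not to the building centroid.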

Multi-stage review—automated pre-checks, junior labeling, senior audit, and random sampling—pushes completeness above 95%. When POI layers stay fresh, navigation failures drop sharply.
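
Completeness can be defined in several ways; one simple reading is the share of required attributes that are actually filled in. The sketch below computes that ratio and draws a reproducible random sample for the senior audit stage. The required-field list, the 5% sampling rate, and the dict-based records are assumptions.

```python
import random

# Assumed set of attributes every POI must carry before release.
REQUIRED_FIELDS = ("position", "category", "entrance", "opening_hours")


def completeness(records: list[dict]) -> float:
    """Fraction (0.0-1.0) of required fields that are present and non-empty."""
    total = len(records) * len(REQUIRED_FIELDS)
    if total == 0:
        return 0.0
    filled = sum(1 for record in records for name in REQUIRED_FIELDS
                 if record.get(name) not in (None, ""))
    return filled / total


def audit_sample(records: list[dict], rate: float = 0.05, seed: int = 0) -> list[dict]:
    """Reproducible random sample (at least one record) for senior audit."""
    if not records:
        return []
    k = max(1, round(len(records) * rate))
    return random.Random(seed).sample(records, k)


batch = [
    {"position": (52.52, 13.40, 34.0), "category": "cafe",
     "entrance": (52.5201, 13.4001), "opening_hours": "08:00-18:00"},
    {"position": (52.53, 13.41, 35.0), "category": "parking",
     "entrance": None, "opening_hours": ""},
]
print(f"completeness: {completeness(batch):.0%}")
```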

Figure 2: Everyday POI pins users see on maps rest on precise 3D coordinates and semantic attributes extracted through careful annotation.

Lane Lines: Bridging 2D Looks and 3D Reality

Lane markings appear simple, yet annotating them accurately is one of the toughest jobs.

Camera-based annotation must cope with fading paint, shadows, rain reflections, and glare. LiDAR adds elevation and intensity data that reveal subtle ridges even when color is invisible. Workflows project point clouds into bird’s-eye view, run multi-channel segmentation, then lift the results back into 3D polylines.
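
The bird's-eye-view projection step can be sketched as rasterizing LiDAR returns into a top-down grid, keeping the strongest intensity per cell so that retroreflective paint stands out from asphalt. This Python sketch covers only the projection; the grid extent, the 10 cm cell size, and the (x, y, z, intensity) column layout are assumptions, and the segmentation and lifting steps are omitted.

```python
import numpy as np


def bev_max_intensity(points: np.ndarray,
                      x_range=(0.0, 40.0), y_range=(-10.0, 10.0),
                      cell_m: float = 0.1) -> np.ndarray:
    """Rasterize an N x 4 array of (x, y, z, intensity) into a top-down grid.

    Each cell holds the maximum intensity of the points that fall in it;
    z is ignored here, although a real pipeline would keep it for lifting back to 3D.
    """
    x, y, intensity = points[:, 0], points[:, 1], points[:, 3]
    keep = (x >= x_range[0]) & (x < x_range[1]) & (y >= y_range[0]) & (y < y_range[1])
    x, y, intensity = x[keep], y[keep], intensity[keep]

    n_rows = int(round((x_range[1] - x_range[0]) / cell_m))
    n_cols = int(round((y_range[1] - y_range[0]) / cell_m))
    # Clip guards against floating-point rounding at the far edge of the grid.
    rows = np.clip(((x - x_range[0]) / cell_m).astype(int), 0, n_rows - 1)
    cols = np.clip(((y - y_range[0]) / cell_m).astype(int), 0, n_cols - 1)

    grid = np.zeros((n_rows, n_cols))
    np.maximum.at(grid, (rows, cols), intensity)  # unbuffered per-cell maximum
    return grid


cloud = np.array([
    [1.05, 0.05, 0.0, 0.3],  # asphalt return
    [1.07, 0.02, 0.0, 0.9],  # brighter return from paint in the same cell
    [50.0, 0.0, 0.0, 1.0],   # outside the grid, dropped
])
bev = bev_max_intensity(cloud)
print(bev.shape, bev.max())
```

`np.maximum.at` is used instead of plain fancy-index assignment because it correctly combines several points that land in the same cell.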

Difficult spots include intersections where lines cross, curved ramps with perspective warp, construction zones with temporary markings, and heavy occlusions from parked cars. Transformer models like MapTR now capture full lane topology instead of isolated lines, improving results in dense cities.

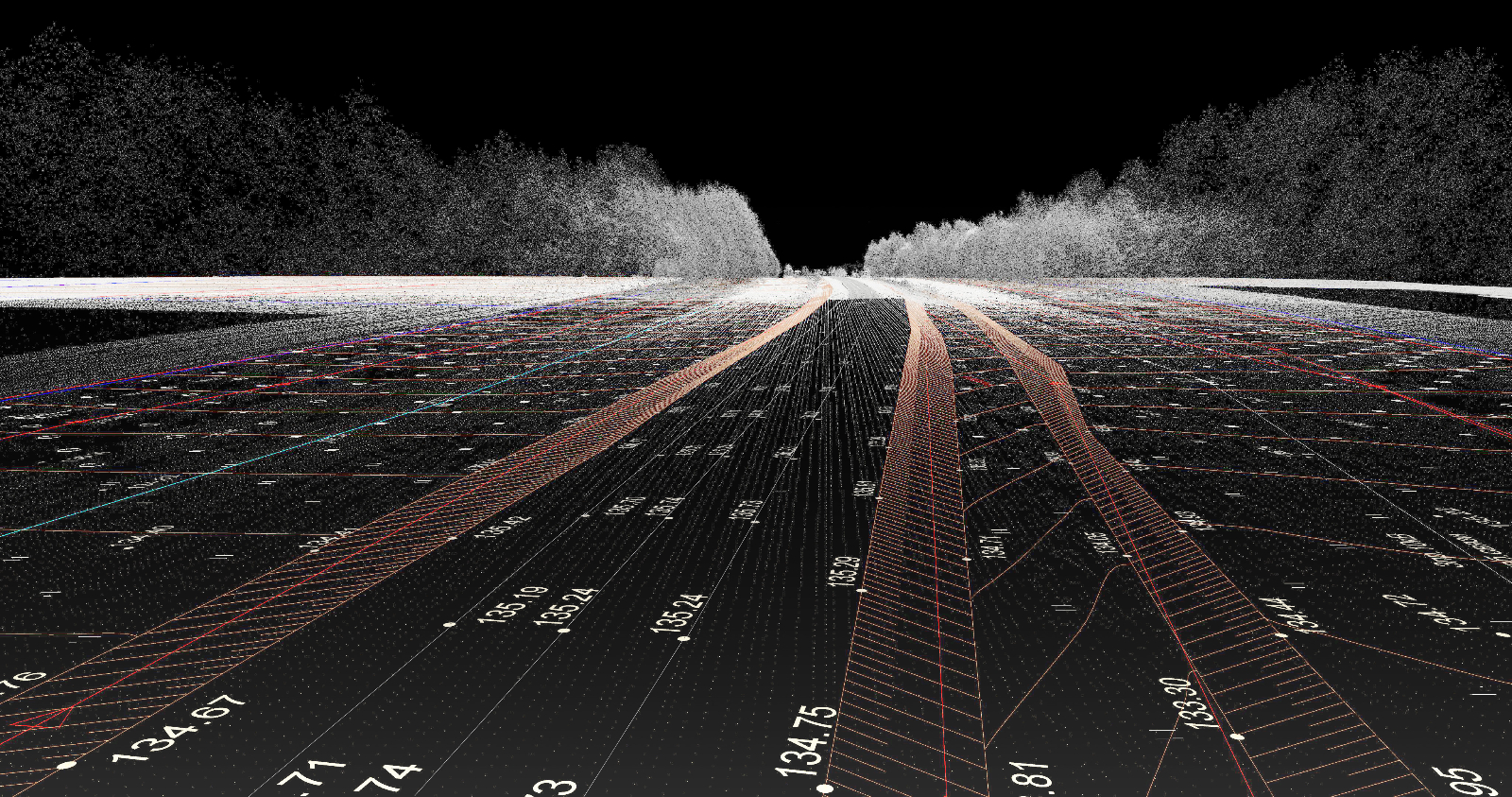

Target accuracy is strict: lateral error must stay below 10 cm, or an autonomous vehicle risks drifting across lane boundaries during merges. Validation measures the deviation at one-meter intervals against manual drive logs. Studies on mobile LiDAR report around 88% successful lane-marking recovery, with the remaining failures needing human fixes.
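
A per-meter deviation check can be sketched by sampling the reference lane line every meter and measuring the shortest distance from each sample to the annotated polyline. The sketch below treats the reference as ground truth, works in a flat 2D metric frame, assumes no repeated vertices, and uses hypothetical function names.

```python
import numpy as np


def resample(polyline: np.ndarray, step: float = 1.0) -> np.ndarray:
    """Return points every `step` meters along an N x 2 polyline."""
    seg = np.diff(polyline, axis=0)
    cum = np.concatenate([[0.0], np.cumsum(np.hypot(seg[:, 0], seg[:, 1]))])
    stations = np.arange(0.0, cum[-1] + 1e-9, step)
    return np.column_stack([np.interp(stations, cum, polyline[:, 0]),
                            np.interp(stations, cum, polyline[:, 1])])


def distance_to_polyline(p: np.ndarray, polyline: np.ndarray) -> float:
    """Shortest distance from point p to any segment (assumes no repeated vertices)."""
    a, b = polyline[:-1], polyline[1:]
    ab = b - a
    t = np.einsum("ij,ij->i", p - a, ab) / np.einsum("ij,ij->i", ab, ab)
    closest = a + np.clip(t, 0.0, 1.0)[:, None] * ab
    return float(np.min(np.hypot(*(p - closest).T)))


def lateral_errors(annotated: np.ndarray, reference: np.ndarray, step: float = 1.0) -> np.ndarray:
    """Deviation of the annotated line, sampled every `step` meters along the reference."""
    return np.array([distance_to_polyline(p, annotated) for p in resample(reference, step)])


reference = np.array([[0.0, 0.0], [10.0, 0.0], [20.0, 2.0]])  # surveyed lane line
annotated = reference + np.array([0.0, 0.05])                  # label offset by 5 cm
errors = lateral_errors(annotated, reference)
print(f"max lateral error: {errors.max():.3f} m, within 10 cm: {np.all(errors < 0.10)}")
```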

Figure 3: LiDAR point cloud of a highway clearly shows extracted lane lines rising above the road—3D data reveals geometry cameras alone miss.

Traffic Signs and Lights: Small Targets, Major Impact

Signs and lights are small in the frame yet must be localized to sub-10 cm precision because vehicles need to know exactly which light governs their lane.

The annotation flow runs as follows: detect objects in 2D, label attributes (shape, arrows, bulb count), fuse with LiDAR to obtain a 3D position, and ensure consistency across frames. Cameras excel at color and pictograms; LiDAR handles thin poles and low light. Camera-LiDAR fusion reaches 95% detection at ranges up to 150 meters on benchmark datasets such as DriveU.

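Challenges peak in urban canyons with overlapping signs, on highway gantries carrying dozens of panels, and in bad weather that cuts image contrast and point density. Annotators capture static details such as mounting position while ignoring the light's momentary color, since the map should record what stays fixed rather than the current signal state.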

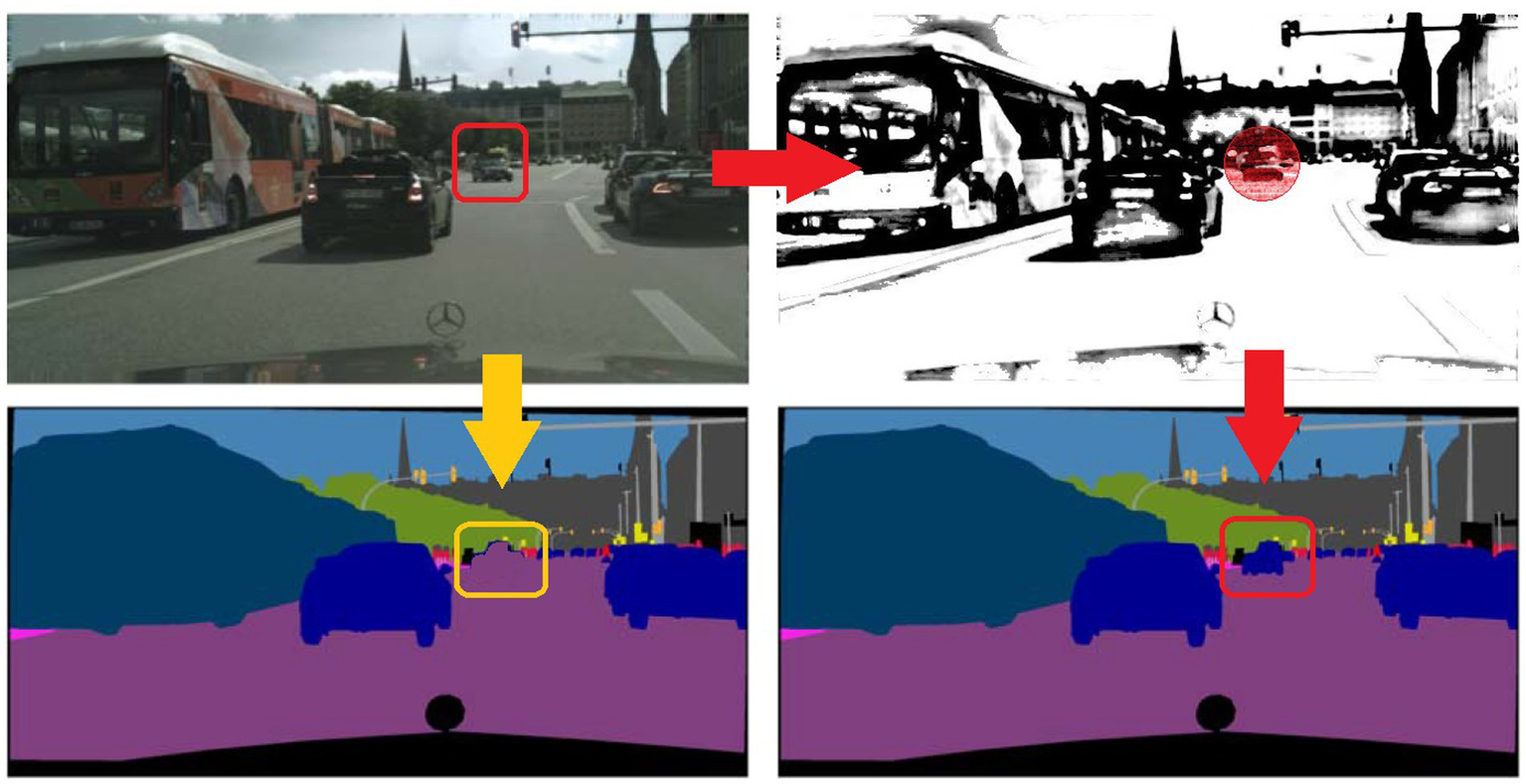

Figure 4: Semantic segmentation in complex traffic scenes highlights typical errors (red boxes). Solid 2D/3D fusion annotation prevents these from reaching production maps.

Quality Control and Human Expertise

Even advanced AI needs human oversight. Active-learning systems deliver 40% higher annotation throughput while keeping intersection-over-union (IoU) scores for critical elements above 0.92. Tiered reviews catch subtle issues that models miss, such as faded symbols under leaves or region-specific sign variations.
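
IoU compares a model's output with the reviewed label as the overlap divided by the union of the two regions. A minimal sketch for boolean segmentation masks; routing frames below the 0.92 figure to human review is an illustrative assumption about how such a threshold might be used, and the function names are hypothetical.

```python
import numpy as np


def mask_iou(pred: np.ndarray, truth: np.ndarray) -> float:
    """IoU of two boolean masks; two empty masks count as a perfect match."""
    inter = np.logical_and(pred, truth).sum()
    union = np.logical_or(pred, truth).sum()
    return float(inter / union) if union else 1.0


def frames_for_review(pairs, threshold: float = 0.92) -> list[int]:
    """Indices of (model_mask, reviewed_mask) pairs whose IoU falls below threshold."""
    return [i for i, (pred, truth) in enumerate(pairs) if mask_iou(pred, truth) < threshold]


truth = np.zeros((10, 10), dtype=bool)
truth[:, :5] = True              # a 50-pixel lane-marking region
close = truth.copy()             # model output identical to the reviewed label
shifted = np.zeros_like(truth)
shifted[:, :4] = True            # model misses one column of the region
print(frames_for_review([(close, truth), (shifted, truth)]))
```

Here the shifted mask overlaps 40 of 50 labeled pixels, an IoU of 0.8, so only that frame is sent back for review.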

Scaling to national coverage demands petabytes of data and thousands of annotation hours, which makes secure cloud platforms essential. Projects spanning several continents face an added burden, because each region follows its own traffic standards.

Real-World Results and the Road Ahead

High-quality annotation delivers measurable gains: fleet operators see 30–50% fewer route corrections, smoother merges, and more confident behavior at intersections. Drivers notice quieter trips with fewer “recalculating” moments and greater trust in the map.

The next leap is fully dynamic maps refreshed in seconds via massive vehicle fleets and edge AI. Yet even the smartest models will still rely on human judgment for edge cases and regulatory needs.

As HD mapping expands globally, annotation must handle diverse languages, sign conventions, and POI categories. Partners experienced in large-scale multilingual data work become critical.

Artlangs Translation has built a strong reputation for delivering precise, culturally aware annotated datasets for international projects. The company is proficient in more than 230 languages and has years of specialized experience in translation services, video localization, short-drama subtitle localization, game localization, and multi-language dubbing for short dramas and audiobooks, as well as extensive multilingual data annotation and transcription. Its work supports numerous successful global initiatives with proven quality and efficiency.

The digital twin of our roads is only as reliable as the annotation behind it. Every accurate lane line, sign, light, and POI reduces wrong turns, builds driver confidence, and paves the way for safer autonomous mobility worldwide.