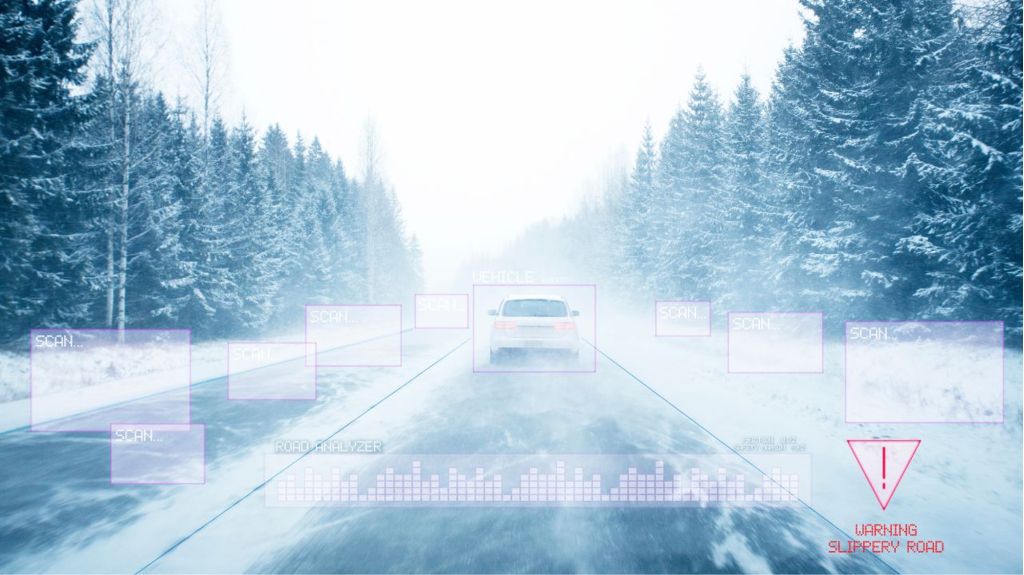

A delivery tricycle darts across a crowded intersection in heavy rain. To a human driver, the odd shape and sudden path are obvious warnings. To many autonomous systems today, that same object can blur into background noise or vanish entirely—leaving the vehicle seconds from disaster. This isn’t a rare edge case. It’s the daily reality at complex urban crossings where rain, snow, or mist turns clean sensor data into a messy cloud of false echoes and missing points.

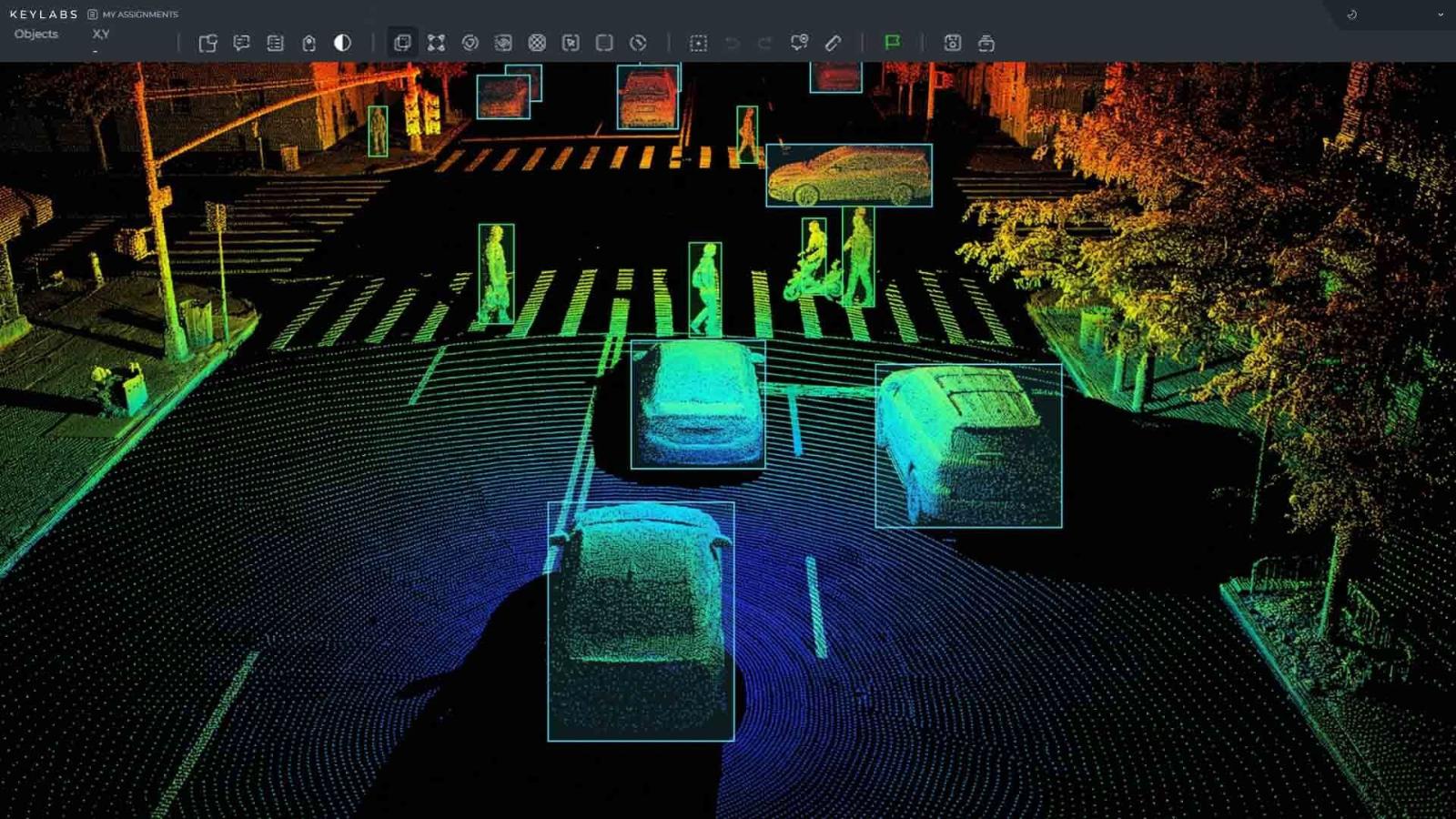

Engineers working on self-driving technology know the stakes. LiDAR remains the gold standard for building rich 3D maps of the world, firing millions of laser pulses per second to create dense point clouds. But a single frame tells only part of the story. Real motion—whether a pedestrian stepping off the curb or a tricycle swerving to avoid a pothole—only reveals itself across dozens of continuous frames. That’s why accurate annotation and tracking of these sequences have become the make-or-break capability for Level 4 and Level 5 autonomy.

The challenge starts with the raw data itself. In clear weather, a LiDAR point cloud might show crisp outlines of cars, bikes, and people. Add rain or snow and everything changes. Water droplets and snowflakes scatter the laser beams, injecting thousands of “ghost” points while weakening returns from real objects. A 2025 analysis of Cylinder3D segmentation models showed accuracy dropping more than 10 percentage points in adverse conditions compared with clean datasets. Real-road tests in Japan confirmed the pattern: detection range and precision fell steadily as rainfall rose from 10 mm/h to 40 mm/h, with thick fog cutting performance even further.

Cleaning this “dirty” data demands more than simple filters. Leading teams now combine multi-frame temporal consistency checks with physics-based noise removal. Motion compensation first aligns successive clouds in a common coordinate frame, so a moving tricycle’s points stay grouped together even as the ego vehicle turns or accelerates. Points that then appear in only one frame, with no nearby counterpart in the frames before or after, are flagged as likely rain or snow. The result is cleaner trajectories that let algorithms predict where that tricycle will be three seconds from now, not just where it was.
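As a rough illustration of the cleaning steps described above, the sketch below first applies a planar motion compensation and then splits one frame into supported and transient points. It is a minimal sketch under stated assumptions, not a production filter: the `Pose` layout, the function names `to_world` and `split_transient`, the brute-force neighbor search, and the 0.5 m radius are all hypothetical choices made for this example.

```python
from math import cos, sin, dist
from typing import List, Tuple

Point = Tuple[float, float, float]
Pose = Tuple[float, float, float]  # hypothetical planar ego pose: (x, y, yaw in radians)


def to_world(points: List[Point], ego: Pose) -> List[Point]:
    """Motion compensation: rotate by the ego yaw, then translate by the ego position."""
    ex, ey, yaw = ego
    c, s = cos(yaw), sin(yaw)
    return [(ex + c * x - s * y, ey + s * x + c * y, z) for x, y, z in points]


def split_transient(frames: List[List[Point]], index: int, radius: float = 0.5):
    """Return (kept, flagged) for frames[index].

    A point is kept if any point in the previous or next frame lies within
    `radius`; otherwise it is flagged as a likely rain or snow return.
    """
    adjacent = [frames[i] for i in (index - 1, index + 1) if 0 <= i < len(frames)]
    if not adjacent:  # nothing to compare against, so flag nothing
        return list(frames[index]), []
    kept, flagged = [], []
    for p in frames[index]:
        supported = any(dist(p, q) <= radius for cloud in adjacent for q in cloud)
        (kept if supported else flagged).append(p)
    return kept, flagged


# Toy data: the ego vehicle advances 1 m per frame; the object creeps 0.2 m per frame.
poses = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
sensor_frames = [
    [(5.0, 1.0, 0.5)],
    [(4.2, 1.0, 0.5), (1.0, -3.0, 1.5)],  # second point has no support in adjacent frames
    [(3.4, 1.0, 0.5)],
]
world_frames = [to_world(pts, pose) for pts, pose in zip(sensor_frames, poses)]
kept, flagged = split_transient(world_frames, index=1)
```

The radius has to exceed the distance a real object can travel between frames, otherwise fast movers get flagged along with the noise. A real pipeline would likely replace the brute-force search with a spatial index and use full 6-DoF poses.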

Annotation itself is the hidden backbone. Every object in every frame needs a precise 3D bounding box, class label (pedestrian, bicycle, tricycle, car), and unique tracking ID that persists across hundreds of frames. Manual labeling of a single dense scene can take hours; doing it consistently for millions of frames is impossible without smart tools. Modern pipelines use semi-automated propagation: an annotator draws one tight box on the first clear frame, then a single-object tracker follows the target through occlusions and weather noise, with human reviewers stepping in only where confidence dips. Multi-object trackers scan entire scenes at once, catching interactions like a pedestrian dodging between two tricycles.
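The semi-automated propagation loop can be sketched in a few lines. The example below is only an illustration under stated assumptions: the `Box3D` and `Annotation` records, the `propagate` function, the 0.6 review threshold, and the `toy_tracker` stand-in are hypothetical and do not correspond to any particular labeling tool or tracker.

```python
from dataclasses import dataclass, replace
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Box3D:
    cx: float
    cy: float
    cz: float
    length: float
    width: float
    height: float
    yaw: float


@dataclass(frozen=True)
class Annotation:
    frame: int
    track_id: int      # stays constant for the whole sequence
    label: str
    box: Box3D
    confidence: float
    needs_review: bool


# Hypothetical tracker interface: (previous box, frame index) -> (new box, confidence)
TrackerStep = Callable[[Box3D, int], Tuple[Box3D, float]]


def propagate(seed: Box3D, label: str, track_id: int, num_frames: int,
              tracker_step: TrackerStep, review_below: float = 0.6) -> List[Annotation]:
    """Carry one hand-drawn box through a sequence, flagging low-confidence frames."""
    annotations = [Annotation(0, track_id, label, seed, 1.0, False)]
    box = seed
    for frame in range(1, num_frames):
        box, conf = tracker_step(box, frame)
        annotations.append(
            Annotation(frame, track_id, label, box, conf, conf < review_below))
    return annotations


def toy_tracker(prev: Box3D, frame: int) -> Tuple[Box3D, float]:
    """Stand-in tracker: shifts the box 0.5 m along x and pretends frame 2 is occluded."""
    return replace(prev, cx=prev.cx + 0.5), (0.4 if frame == 2 else 0.9)


seed_box = Box3D(cx=5.0, cy=1.0, cz=0.8, length=2.0, width=1.0, height=1.6, yaw=0.0)
labels = propagate(seed_box, "tricycle", track_id=7, num_frames=4, tracker_step=toy_tracker)
review_queue = [a.frame for a in labels if a.needs_review]
```

Here only the frame where the stand-in tracker reports low confidence lands in `review_queue`, which mirrors the idea of reviewers stepping in only where confidence dips. Keeping the tracker behind a callable makes it straightforward to swap in a real single-object tracker later.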

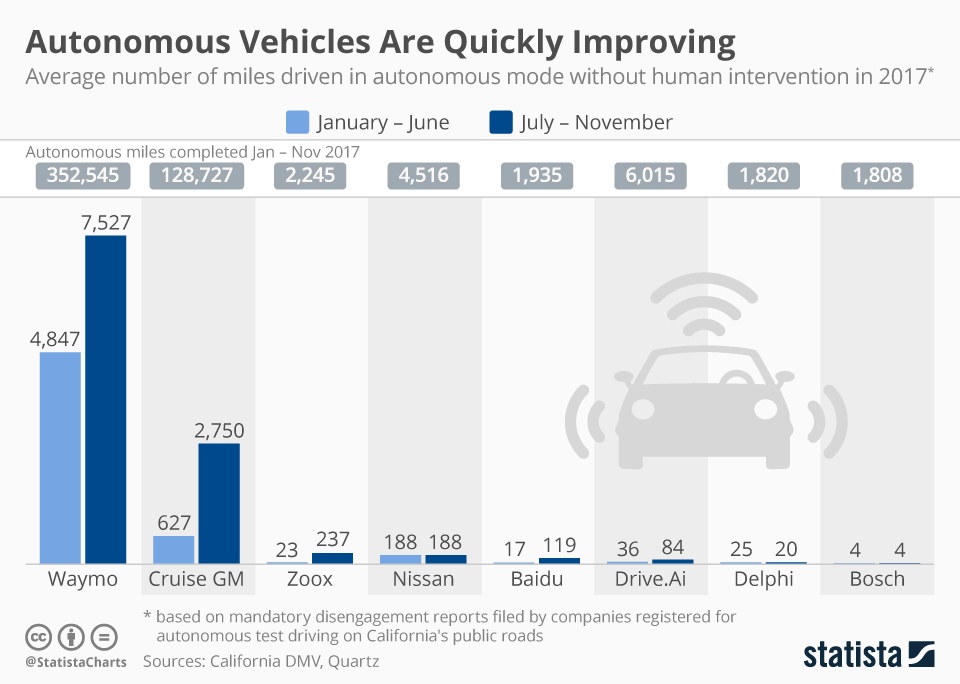

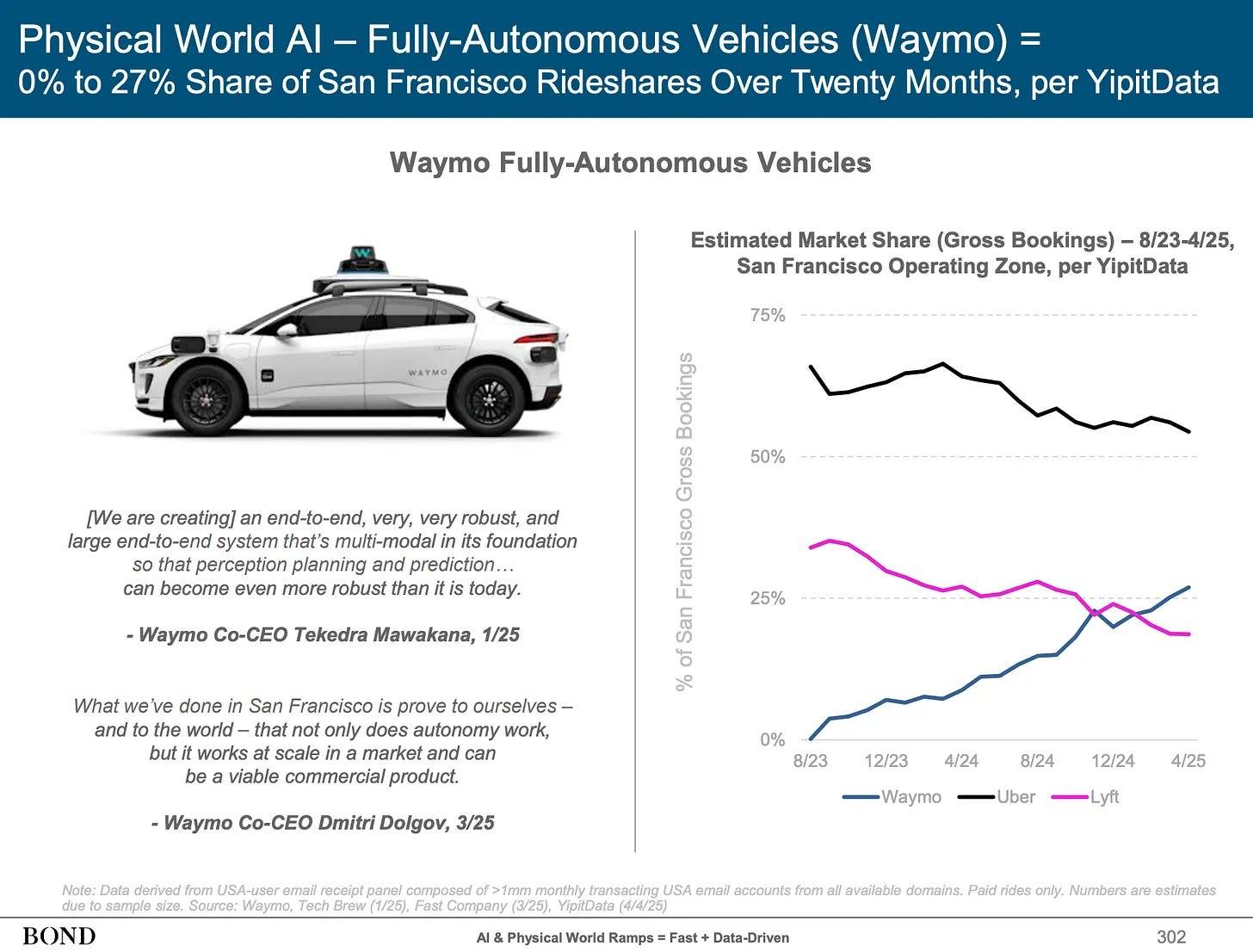

The payoff shows up in safety numbers. Across rider-only miles logged through 2025, Waymo reported 90% fewer serious-injury crashes and 82% fewer airbag deployments than human-driven benchmarks in the same cities and routes. Those gains didn’t come from better hardware alone. They also depended on training with meticulously annotated continuous-frame data that teaches the system to recognize irregular shapes even when partially obscured by rain.

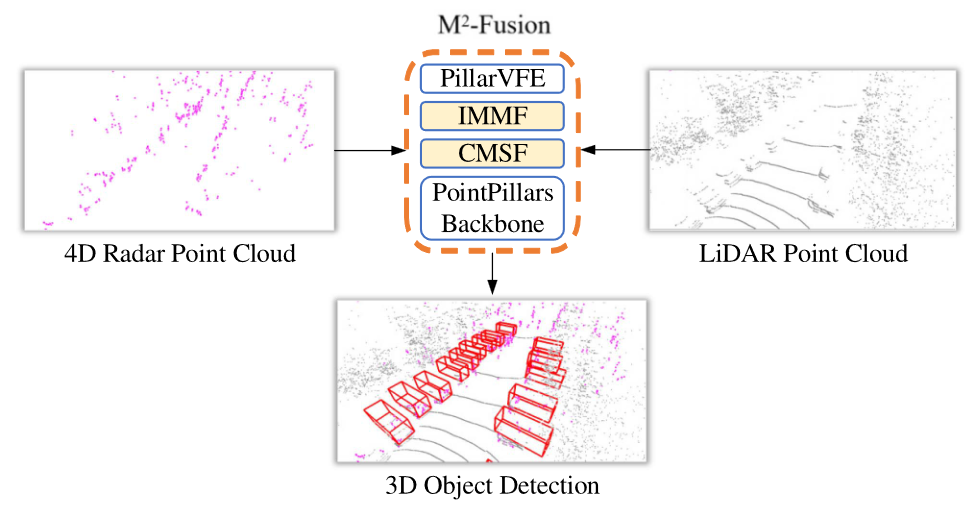

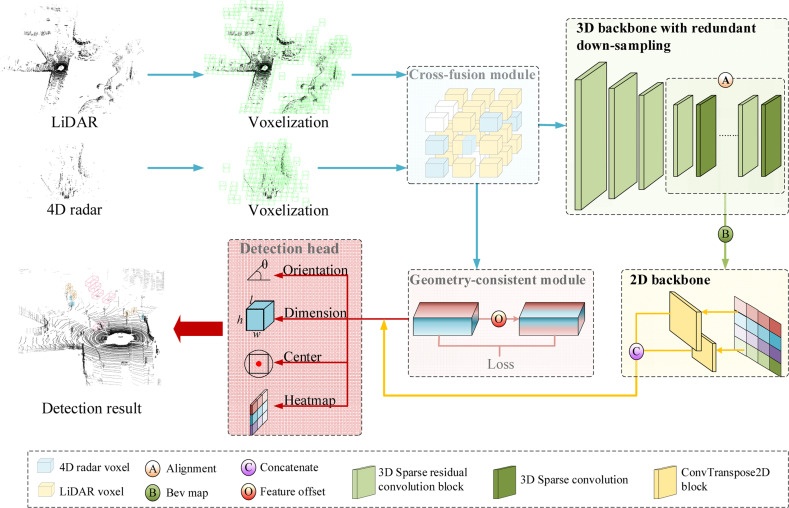

Fusion with 4D millimeter-wave radar pushes robustness further. Unlike traditional 3D radar, 4D versions add precise elevation and dense point clouds of their own, performing reliably when LiDAR struggles. By feeding both sensor streams into shared neural backbones—often with motion-aware alignment modules—systems maintain tracking of a tricycle even when LiDAR returns are sparse. Recent fusion architectures like MoRAL demonstrate exactly this: radar provides velocity anchors while LiDAR supplies shape detail, and the combined model handles rain, fog, and urban clutter better than either sensor alone.
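The division of labor between the two sensors can be illustrated with a much simpler scheme than a learned fusion network. The sketch below is a plain nearest-neighbor association and is not MoRAL or any published architecture: it assumes LiDAR clusters have already been reduced to 2D centroids, that radar detections arrive as `(x, y, radial_velocity)` tuples in the same coordinate frame, and that a 1.5 m gate is reasonable. All of these names and values are hypothetical.

```python
from math import hypot
from statistics import mean
from typing import List, Optional, Tuple

Centroid = Tuple[float, float]            # LiDAR cluster center (x, y) in meters
RadarHit = Tuple[float, float, float]     # (x, y, radial velocity in m/s)


def attach_radar_velocity(
    lidar_centroids: List[Centroid],
    radar_hits: List[RadarHit],
    gate: float = 1.5,
) -> List[Tuple[Centroid, Optional[float]]]:
    """Pair each LiDAR centroid with the mean radial velocity of nearby radar hits.

    Returns None as the velocity when no radar hit falls inside the gate.
    """
    fused = []
    for cx, cy in lidar_centroids:
        speeds = [v for rx, ry, v in radar_hits if hypot(rx - cx, ry - cy) <= gate]
        fused.append(((cx, cy), mean(speeds) if speeds else None))
    return fused


centroids = [(10.0, 2.0), (30.0, -4.0)]
hits = [(10.3, 2.1, -3.0), (9.8, 1.7, -3.5), (50.0, 0.0, 1.0)]
fused = attach_radar_velocity(centroids, hits)
```

In this toy input the first cluster picks up a velocity from two radar hits, while the second has no radar support and keeps `None`. A learned fusion model performs this kind of alignment inside the network rather than with a fixed gate, so treat this only as intuition for why radar velocity and LiDAR shape complement each other.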

The human cost of getting this wrong is real. NHTSA data through late 2025 recorded over 5,200 incidents involving automated systems, many traced to perception failures in low-visibility or complex traffic. Failures to classify non-standard vehicles—tricycles, e-scooters, delivery carts—appear repeatedly in urban test logs. Better continuous-frame annotation directly attacks that gap by teaching models the full range of real-world motion patterns, not just textbook car and pedestrian silhouettes.

Scaling these capabilities globally brings another layer: datasets must work across continents, languages, and traffic cultures. A tricycle in Singapore looks and moves differently from one in rural India, and both differ from a European cargo bike. Training data therefore needs not only technical precision but also linguistic and cultural localization so that labels, scenario descriptions, and edge-case annotations translate accurately for development teams worldwide.

That’s where deep domain expertise in both technology and language becomes indispensable. Artlangs Translation offers translation in over 230 languages and has spent years focused on translation services, video localization, subtitle localization for short dramas, game localization, multi-language dubbing for short dramas and audiobooks, and multi-language data annotation and transcription. Their portfolio of successful projects shows how high-quality, culturally attuned labeling turns raw sensor streams into globally reliable training sets, helping autonomous systems recognize the same tricycle whether the engineer reviewing the data is in Beijing, Stuttgart, or Silicon Valley.

The road ahead is still long, but the direction is clear. When LiDAR point clouds are annotated with care across continuous frames, cleaned of weather noise, fused intelligently with 4D radar, and localized for every market, cars stop merely detecting objects and start truly understanding the living, unpredictable world around them. The tricycle at the rainy intersection stops being a blind spot and becomes just another predictable participant in safe, shared mobility.