Large language models promise to break down barriers in global business, customer service, and innovation. Yet most still stumble when asked to handle anything beyond a handful of dominant languages. The root cause is straightforward: training data that overwhelmingly favors English and a few high-resource languages, leaving models biased, inaccurate, and culturally tone-deaf in the markets that matter most.

Consider the hard numbers. In GPT-3, English made up more than 92% of training tokens, with everything else sharing the remaining sliver. LLaMA-2 showed a similar skew at roughly 89.7% English. Even after filtering massive web crawls like Common Crawl (which itself is only about 45% English at the raw webpage level), the cleaned datasets that actually reach model training remain heavily tilted. The result? Performance gaps that widen dramatically as you move away from English, French, German, or Chinese.

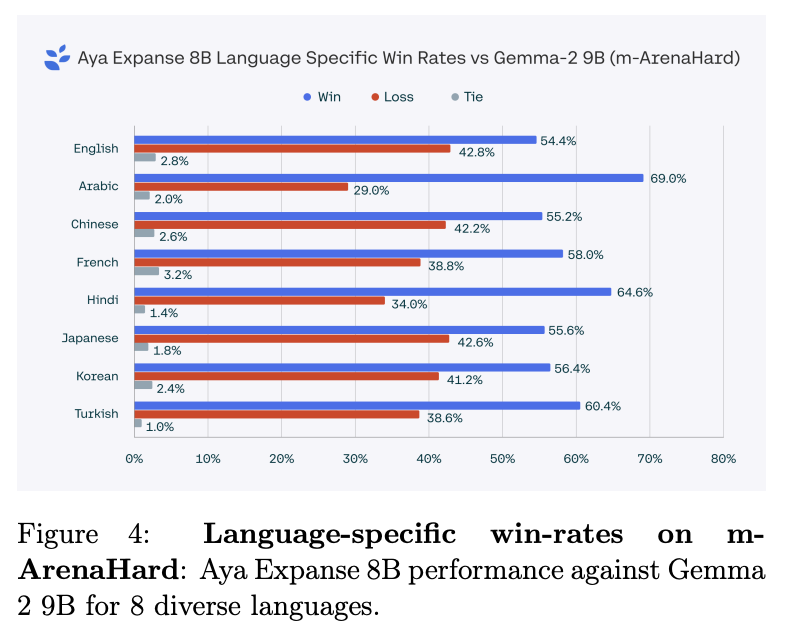

This chart of language-specific win rates on a multilingual benchmark (Aya Expanse 8B vs. Gemma-2 9B) illustrates the pattern clearly: English sits comfortably ahead, while Arabic, Hindi, Japanese, and others show inconsistent or lower results. For low-resource languages—think Kannada, Odia, or countless others spoken by millions—the drop-off is even steeper. Models trained on scarce or low-quality native data simply cannot reason, translate, or generate with the same fluency or cultural awareness.

The business impact is real. An e-commerce platform expanding into Southeast Asia watches its chatbot confuse local idioms and lose customers. A global enterprise rolls out an internal knowledge tool that performs brilliantly for headquarters but frustrates regional teams. A healthcare AI misinterprets symptoms described in Spanish or Arabic. These aren’t edge cases; they’re daily realities when training data doesn’t reflect the world.

Where does the data actually come from—and why is most of it insufficient?

Nearly every major LLM is built on the same backbone: web scrapes (Common Crawl being the giant), Wikipedia dumps, books, scientific papers, code repositories, and social media archives. These sources deliver scale, on the order of trillions of tokens, but they amplify existing imbalances. English-language websites dominate high-quality, crawlable content. Translations of English material often sneak into “multilingual” slices, introducing second-hand phrasing that lacks native rhythm and nuance.

Parallel corpora (sentence pairs across languages) help with alignment, but high-quality ones are rare outside a dozen language pairs. User-generated content from forums or reviews adds diversity yet brings noise, slang drift, and toxicity. Synthetic data generated by other LLMs can fill volume gaps, yet it risks inheriting the very biases it was meant to fix.

The gold standard remains human-curated, native-speaker data—carefully collected, transcribed, annotated, and validated. That’s where specialized expertise becomes non-negotiable. Generic web crawls or off-the-shelf datasets simply cannot deliver the cultural depth required for models that must serve real users in real contexts.

This is exactly where Artlangs Translation has built its edge.

For years we have stood in conference halls and boardrooms providing simultaneous interpreting across more than 230 languages. That work taught us something fundamental: accurate communication is never just about words. It’s about tone, intent, cultural reference, and unspoken expectations. When a Japanese executive pauses for emphasis or a Brazilian speaker uses a regional idiom, the interpreter must convey the full meaning—not a literal translation.

We carry that same rigor into multilingual AI data collection for LLMs. Our teams of native linguists and subject-matter experts handle the full pipeline: sourcing authentic content from target-language media, transcribing spoken material (podcasts, webinars, meetings), subtitling and localizing short dramas and video assets, dubbing audiobooks and game dialogue, and performing meticulous data annotation and quality assurance.

Clients who previously relied on scraped or machine-translated datasets now see measurable lifts—better intent recognition in customer support bots, more natural generation in marketing tools, reduced hallucination rates in low-resource language queries. One technology partner needed reliable training data for 15 underrepresented Asian and European languages; our annotated corpus, drawn from real conference transcripts and localized entertainment content, helped their model close performance gaps that generic approaches had left untouched.

Practical steps for better multilingual data collection

Prioritize native over translated. Seek content originally created in the target language whenever possible. Our video localization and short-drama subtitle projects routinely surface fresh, context-rich material that web crawls miss.

Layer human annotation early. Automated tools can pre-filter, but native speakers must validate labels for sentiment, named entities, cultural sensitivity, and domain accuracy. This is core to our multilingual data annotation and transcription services.

Diversify sources beyond the web. Conference recordings, industry reports, localized games, and audiobooks provide structured, high-signal data that reflects professional and everyday usage.

Measure and iterate on bias. Track performance not just by aggregate benchmarks but by language-specific metrics. The gaps become obvious quickly—and fixable when you control the data pipeline.

Scale ethically. Engage communities, respect privacy, and compensate contributors fairly. Long-term trust builds better data than shortcuts ever could.
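
To make the "measure and iterate on bias" step concrete, here is a minimal Python sketch of per-language scoring. It assumes your evaluation results are already available as (language, correct) pairs; the function names, language codes, and sample results are made up for illustration and do not come from any real benchmark.

```python
from collections import defaultdict


def per_language_accuracy(records):
    """Return accuracy per language from (language, is_correct) pairs."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for lang, is_correct in records:
        totals[lang] += 1
        correct[lang] += int(is_correct)
    return {lang: correct[lang] / totals[lang] for lang in totals}


def gaps_vs_reference(accuracy, reference="en"):
    """Return how far each language trails the reference language."""
    ref_score = accuracy[reference]
    return {
        lang: ref_score - score
        for lang, score in accuracy.items()
        if lang != reference
    }


# Hypothetical evaluation results, invented for this example only.
records = [
    ("en", True), ("en", True), ("en", True), ("en", False),
    ("hi", True), ("hi", False), ("hi", False), ("hi", False),
    ("kn", False), ("kn", False), ("kn", True), ("kn", False),
]

accuracy = per_language_accuracy(records)
gaps = gaps_vs_reference(accuracy)
aggregate = sum(is_correct for _, is_correct in records) / len(records)

print(f"aggregate: {aggregate:.2f}")
for lang, score in sorted(accuracy.items()):
    print(f"{lang}: {score:.2f}")
```

In this toy data the aggregate score sits well below the English score and well above the Hindi and Kannada scores, which is exactly the kind of gap a single headline number hides. In practice you would swap in the metric that fits your task and feed the per-language gaps back into data collection priorities.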

The models that will dominate the next wave of AI won’t be the ones with the most parameters. They’ll be the ones trained on data that truly represents the people using them.

At Artlangs Translation, we’ve spent more than a decade turning linguistic expertise into scalable, production-ready datasets. Whether you need conference-derived parallel data, game-localized dialogue corpora, short-drama dubbing aligned with scripts, or end-to-end annotation for 50+ languages, our teams deliver the quality that turns good models into globally capable ones.

If your LLM roadmap includes markets where English is not the default—or if current performance in non-English languages is holding you back—let’s talk. Drop a comment, send a message, or visit our profile to schedule a quick call. The world’s languages are waiting for AI that finally speaks them fluently.

What multilingual data challenge are you tackling right now? We’d love to hear in the comments.