Translating a detailed AI computing power report isn’t just swapping words—it’s making sure every exaflop, benchmark score, and growth projection lands exactly right in another language. These documents are packed with hyper-specific metrics: H100-equivalent clusters, power draw in megawatts, efficiency curves, and forecasts that can shift billion-dollar investment decisions. Miss a decimal point or soften a technical term like “mixed-precision FLOPS,” and suddenly a country’s leadership position looks weaker, or a cost-saving trend gets overstated. For executives, analysts, and government teams who rely on these reports to steer strategy, even small slips add up fast.

The numbers driving today’s AI race tell the story clearly. The most recent comprehensive data, pulled from the 2025 Stanford AI Index and TRG Datacenters’ global infrastructure analysis (still the benchmark as we head into 2026), shows training compute for frontier models doubling roughly every five months. At the same time, hardware costs are dropping about 30 percent per year while energy efficiency improves around 40 percent annually. These aren’t theoretical curves—they’re the reason massive data-center builds are popping up from the Middle East to East Asia.

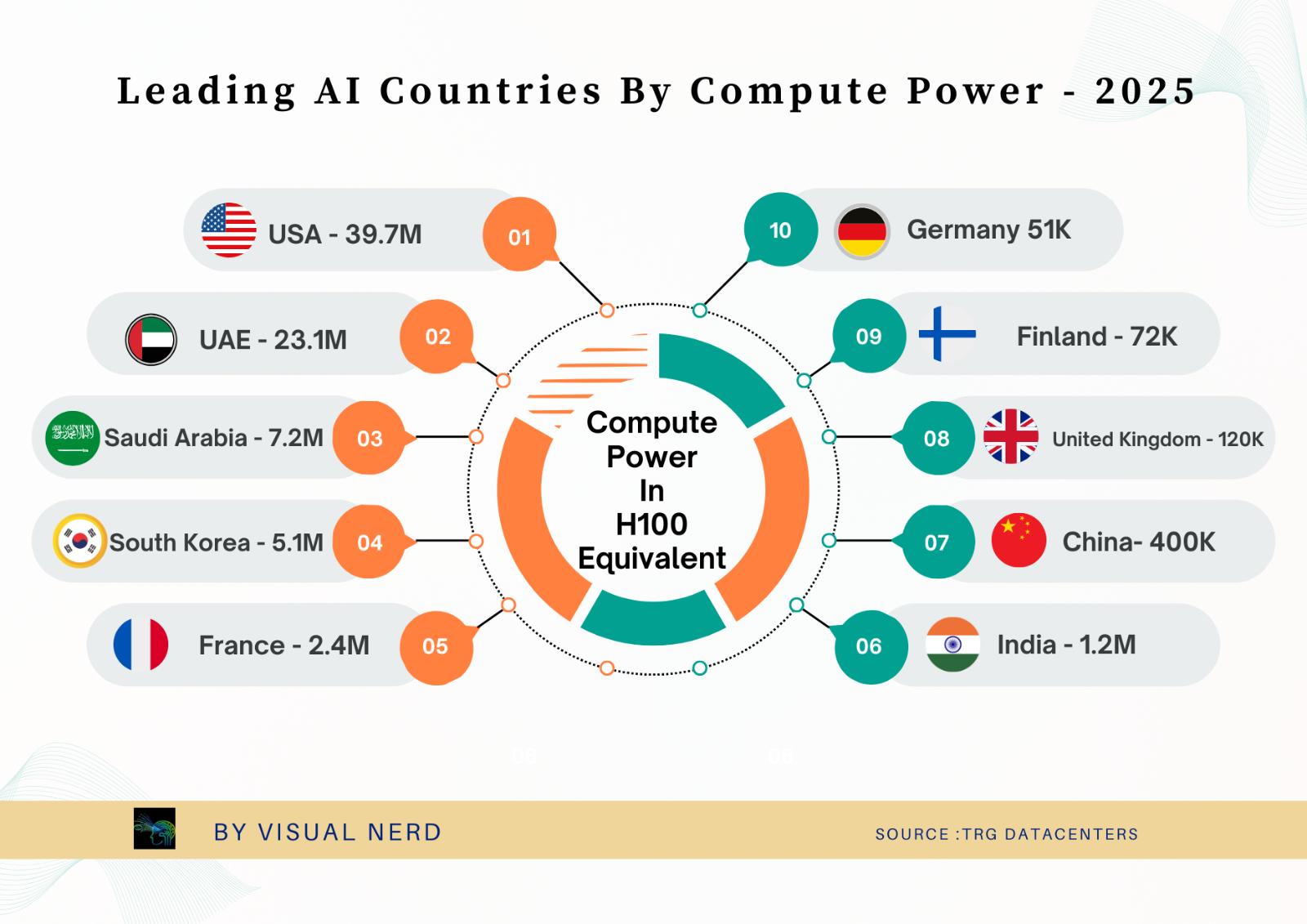
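To see why these rates matter so much when they are carried into another language, it helps to convert them into plain multiples. The snippet below is a minimal sketch of that arithmetic; the function names and the three-year horizon are our own illustrative choices, not something the reports define.

```python
def annual_factor_from_doubling(months_to_double: float) -> float:
    """Growth multiple over 12 months for a quantity that doubles
    every `months_to_double` months."""
    return 2 ** (12 / months_to_double)


def compound(rate_per_year: float, years: float) -> float:
    """Multiple after `years` of a constant yearly rate
    (use a negative rate for a decline)."""
    return (1 + rate_per_year) ** years


# Training compute doubling roughly every five months
compute_per_year = annual_factor_from_doubling(5)   # about 5.28x per year

# Hardware cost falling about 30 percent per year, over an assumed 3 years
cost_after_3y = compound(-0.30, 3)                  # 0.343 of the starting cost

# Energy efficiency improving about 40 percent per year, over an assumed 3 years
efficiency_after_3y = compound(0.40, 3)             # 2.744x the starting efficiency

print(f"Compute growth per year: {compute_per_year:.2f}x")
print(f"Cost after 3 years:      {cost_after_3y:.3f} of today")
print(f"Efficiency after 3 years: {efficiency_after_3y:.3f}x today")
```

A translation that turns "every five months" into "every five years" changes the yearly multiple from about 5.3x to about 1.15x, which is the kind of slip that quietly rewrites an investment case.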

What the rankings reveal is even more striking. When you measure total AI compute capacity in standardized NVIDIA H100 equivalents, the picture is sharply concentrated.

The United States sits far ahead with 39.7 million H100 equivalents, followed by the United Arab Emirates at 23.1 million. Saudi Arabia, South Korea, and France round out the top five. China, despite leading in sheer number of clusters (230), shows a much lower effective compute total at around 400,000 H100 equivalents—highlighting how raw cluster counts don’t always equal usable power when you factor in architecture and utilization.

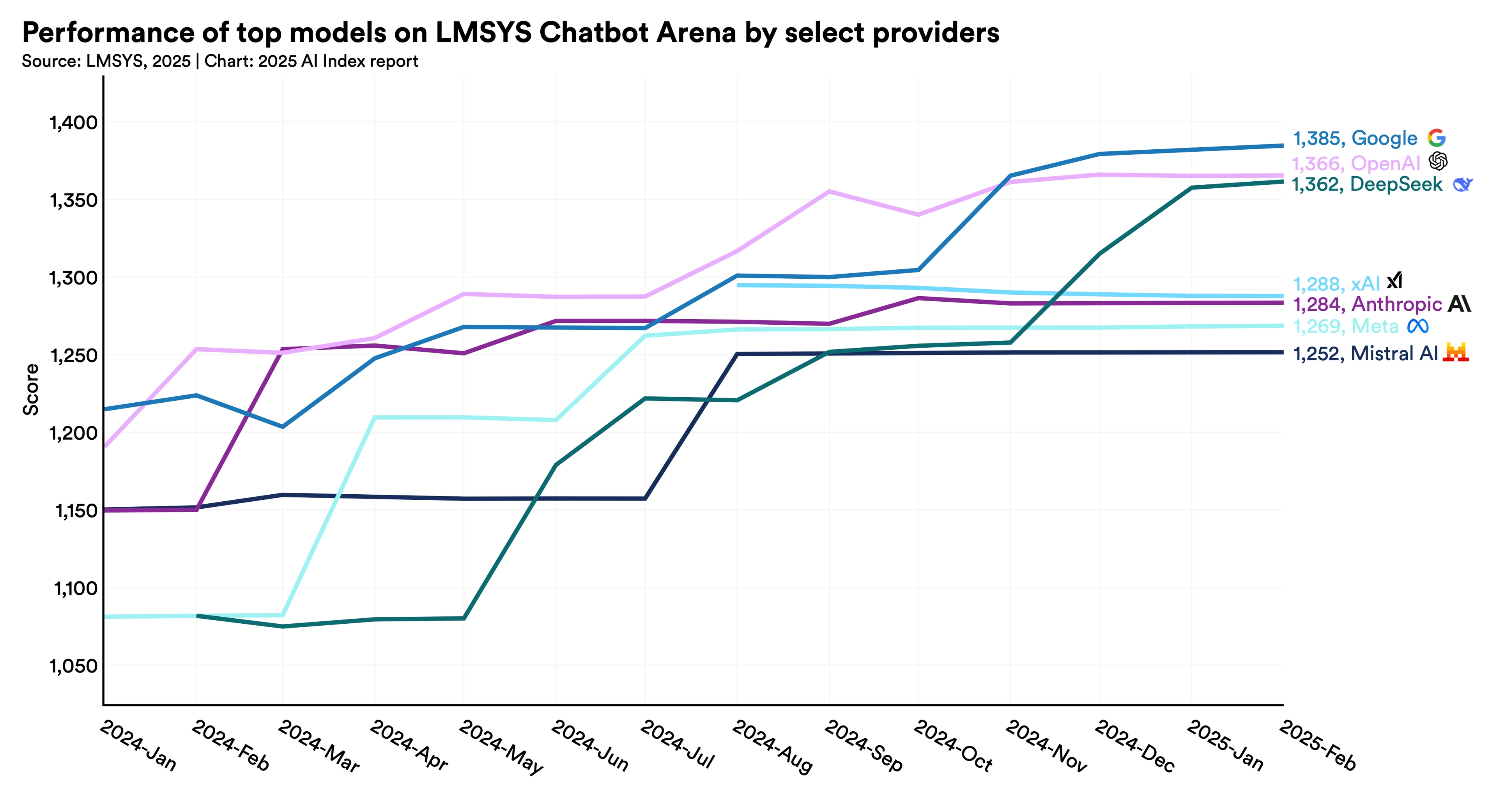

That same Stanford report also tracks how quickly the frontier is tightening. Smaller, more efficient models are closing the gap on the giants at an impressive clip, as the LMSYS Chatbot Arena scores of leading providers’ top models over the past year show.

The scores are converging: Google, OpenAI, and DeepSeek have all pushed past 1,360 points, while others like Anthropic and Meta hold strong just behind. What used to be a wide performance spread is now a tight pack, which changes how companies and countries think about where to invest next.

The real headache in translation comes with the forward-looking sections. These reports don’t just list today’s numbers; they extrapolate from trends such as inference costs falling more than 280-fold since late 2022 and open-weight models narrowing the gap to under 2 percent on key benchmarks, and they project training runs heading into fresh exaflop territory. Every prediction comes with footnotes, confidence intervals, and scenario assumptions. A generic translator might render “compute demand will double within 18 months” cleanly, but the nuance around methodology or regional variables can disappear, leaving readers with a distorted view of risk and opportunity.
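One practical safeguard is an automated check that every figure in the source still appears, with the same value, in the translation. The sketch below is one possible way to do that, not a description of any particular tool: the function names, the separator defaults (English source, German-style target), and the sample German sentences are all illustrative assumptions.

```python
import re
from collections import Counter
from decimal import Decimal

# Digits, optionally followed by more digit groups joined by "." or ","
NUMBER = re.compile(r"\d+(?:[.,]\d+)*")


def extract_numbers(text: str, decimal_sep: str, group_sep: str) -> Counter:
    """Collect every numeric token in `text` as a Decimal, after
    normalizing the locale's grouping and decimal separators."""
    values = []
    for token in NUMBER.findall(text):
        normalized = token.replace(group_sep, "").replace(decimal_sep, ".")
        values.append(Decimal(normalized))
    return Counter(values)


def compare_numbers(source: str, target: str,
                    source_seps=(".", ","), target_seps=(",", ".")):
    """Return (missing, unexpected): values found only in the source,
    and values found only in the target. Separators are given as
    (decimal_sep, group_sep) for each side."""
    src = extract_numbers(source, *source_seps)
    tgt = extract_numbers(target, *target_seps)
    return src - tgt, tgt - src


source = ("The United States sits far ahead with 39.7 million H100 "
          "equivalents; top models pass 1,360 points.")
# Hypothetical translations, written only for this example
good = ("Die USA liegen mit 39,7 Millionen H100-Äquivalenten weit vorn; "
        "Spitzenmodelle überschreiten 1.360 Punkte.")
bad = ("Die USA liegen mit 3,97 Millionen H100-Äquivalenten weit vorn; "
       "Spitzenmodelle überschreiten 1.360 Punkte.")

print(compare_numbers(source, good))  # both counters empty
print(compare_numbers(source, bad))   # 39.7 missing, 3.97 unexpected
```

A check like this only catches digits. It will not notice "five months" becoming "5 Jahre", comma-separated lists without spaces would be read as one number, and dropped footnotes or confidence intervals still need a human reviewer who understands the methodology.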

Energy metrics add another layer of complexity. Carbon-equivalent emissions, cooling water usage, and grid-impact forecasts appear throughout. Get the units or context wrong in a market with strict ESG rules, and the whole report can mislead on sustainability claims.
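Unit handling is where this gets concrete. When a report written in US customary units is localized for a metric market, the conversions have to be exact and applied consistently. Here is a small sketch using standard conversion factors; the input quantities are made-up placeholders, not figures from any report, and the full-year-at-constant-load assumption is ours.

```python
LITERS_PER_US_GALLON = 3.785411784   # exact by definition
KG_PER_SHORT_TON = 907.18474         # exact by definition
HOURS_PER_YEAR = 8760                # non-leap year


def us_gallons_to_liters(gallons: float) -> float:
    """Convert US liquid gallons to liters."""
    return gallons * LITERS_PER_US_GALLON


def short_tons_to_tonnes(short_tons: float) -> float:
    """Convert US short tons to metric tonnes."""
    return short_tons * KG_PER_SHORT_TON / 1000


def megawatts_to_mwh_per_year(megawatts: float) -> float:
    """Energy used in a year, assuming the load runs constantly
    at the given power draw (an illustrative simplification)."""
    return megawatts * HOURS_PER_YEAR


# Placeholder inputs for illustration only
print(us_gallons_to_liters(1_000_000))    # cooling water, in liters
print(short_tons_to_tonnes(1_000))        # CO2-equivalent, in tonnes
print(megawatts_to_mwh_per_year(100))     # yearly energy, in MWh
```

The short ton versus metric tonne case is the one to watch: the words look nearly identical in many languages, yet the quantities differ by roughly 9 percent, enough to misstate an emissions claim.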

In the end, the difference between a good translation and a great one is whether decision-makers in Tokyo, Riyadh, or Berlin can trust the data the same way an English reader does. That clarity lets teams move faster on infrastructure bets, policy papers, or competitive analysis without second-guessing the source material.

At Artlangs Translation, this is exactly the kind of high-precision work we’ve been handling for years. With fluency across more than 230 languages, our teams have built deep experience in technical translation, video localization, short-drama subtitle work, game localization, multilingual dubbing for dramas and audiobooks, plus detailed data annotation and transcription projects. That mix of technical depth and creative adaptation means we don’t just move numbers across borders—we make sure the strategic insights they carry stay sharp and actionable. When your next AI infrastructure report needs to inform a global audience, the data should cross languages without losing a single watt of its impact.