Anyone building AI models in 2026 already feels the shift: raw data volume is no longer the bottleneck. The real challenge is turning that data into training material that’s accurate, compliant, and actually useful across languages and domains. Teams that treat data annotation as an afterthought are watching their models hallucinate, amplify bias, or fail regulatory audits.

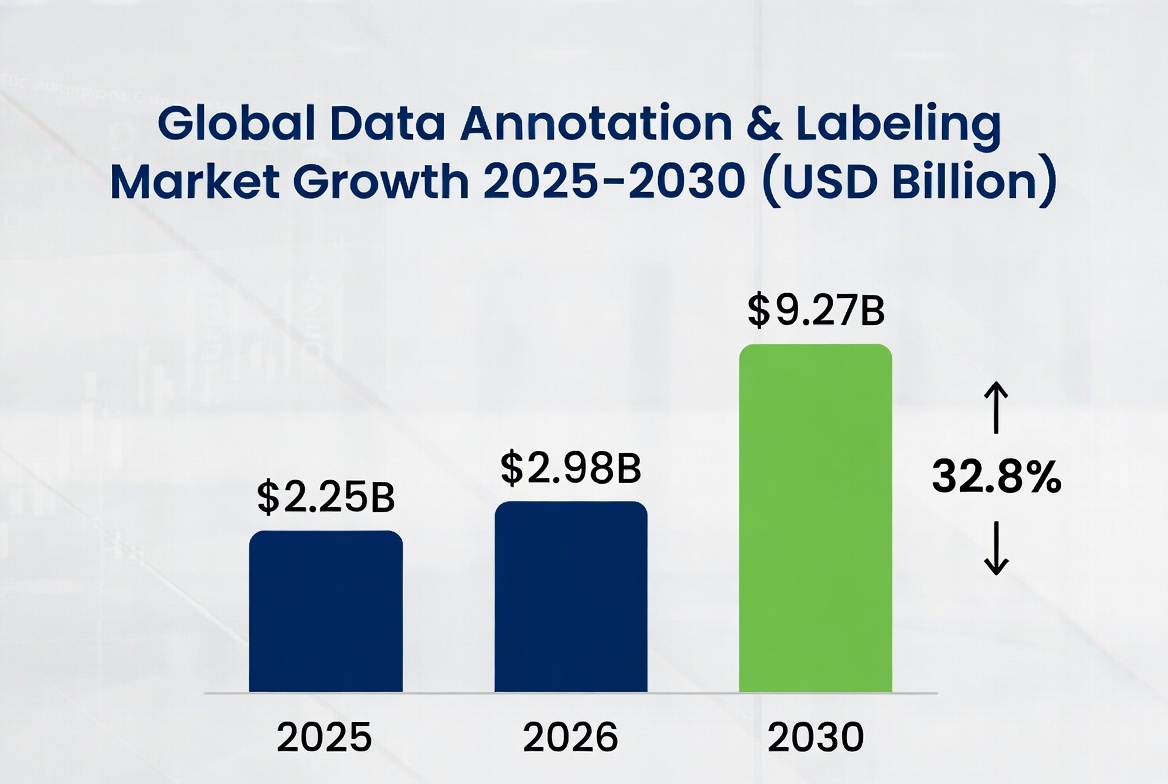

The numbers back it up. The global data annotation and labeling market stood at $2.25 billion in 2025 and is projected to reach $2.98 billion this year, on track to hit $9.27 billion by 2030 at a 32.8% compound annual growth rate. That expansion isn’t coming from more cheap labels; it’s driven by demand for higher-quality, multimodal, and regulation-ready datasets.
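The three figures are consistent with each other. A quick check in Python, assuming the 32.8% rate covers the four years from 2026 to 2030 (the helper names are ours, for illustration):

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1 / years) - 1


def project(start: float, rate: float, years: int) -> float:
    """Value after compounding `start` at `rate` for `years` years."""
    return start * (1 + rate) ** years


# Market size in billions of US dollars, as quoted in the article.
size_2025, size_2026, size_2030 = 2.25, 2.98, 9.27

implied_rate = cagr(size_2026, size_2030, years=4)
print(f"Implied 2026-2030 CAGR: {implied_rate:.1%}")                     # 32.8%
print(f"2030 projection at 32.8%: ${project(size_2026, 0.328, 4):.2f}B")  # $9.27B
print(f"2025-2026 growth: {size_2026 / size_2025 - 1:.1%}")               # 32.4%
```

The implied rate rounds to 32.8%, and compounding $2.98 billion at that rate for four years lands at about $9.27 billion. The 2025-to-2026 step works out to roughly 32.4%, in line with the same trend.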

Here are the six shifts that are already reshaping how smart teams approach data annotation in 2026—and what they mean for anyone scaling AI right now.

1. Multimodal annotation moves from nice-to-have to non-negotiable

Models now ingest text, images, video, audio, and 3D sensor data together. A single autonomous driving dataset might require synchronizing a driver’s spoken command with eye gaze, road markings, and LiDAR points in the exact same frame. Generic tools that handle one modality at a time simply break. Teams that master temporal and relational consistency across streams gain a massive edge in real-world performance.
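Temporal consistency is usually where single-modality tooling fails first. A minimal sketch, assuming every stream is already timestamped on a shared clock in seconds: snap each gaze or speech event to the nearest LiDAR sweep and flag anything further away than a tolerance for human review. The `Event` type, the stream names, and the 50 ms tolerance are hypothetical choices for illustration, not taken from any particular tool.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass
class Event:
    stream: str       # hypothetical stream name, e.g. "gaze" or "speech"
    timestamp: float  # seconds on a clock shared by all sensors
    label: str


def nearest_frame(frame_times: list[float], t: float) -> int:
    """Index of the frame timestamp closest to t (frame_times must be sorted)."""
    i = bisect_left(frame_times, t)
    if i == 0:
        return 0
    if i == len(frame_times):
        return len(frame_times) - 1
    # t lies between frames i-1 and i: pick the closer one (ties go to the earlier frame).
    return i if frame_times[i] - t < t - frame_times[i - 1] else i - 1


def align(frame_times: list[float], events: list[Event], tolerance: float = 0.05):
    """Group events under their nearest frame; return (aligned, rejected)."""
    aligned: dict[int, list[Event]] = {}
    rejected: list[Event] = []
    for ev in events:
        idx = nearest_frame(frame_times, ev.timestamp)
        if abs(frame_times[idx] - ev.timestamp) <= tolerance:
            aligned.setdefault(idx, []).append(ev)
        else:
            rejected.append(ev)  # out of sync with every sweep: route to a human
    return aligned, rejected


lidar_sweeps = [0.0, 0.1, 0.2, 0.3]  # 10 Hz LiDAR
events = [
    Event("speech", 0.12, "command: turn left"),
    Event("gaze", 0.19, "looking at lane marking"),
    Event("gaze", 0.45, "looking at mirror"),
]
aligned, rejected = align(lidar_sweeps, events)
```

Here the spoken command and the first gaze sample land on sweeps 1 and 2, while the gaze sample at 0.45 s has no sweep within 50 ms and is sent back for review. Real pipelines also have to handle clock drift between sensors, which this sketch ignores.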

2. Synthetic data gets paired with mandatory human anchors

Pure synthetic data sounded like a shortcut, but over-reliance on it can trigger “model collapse”, a failure mode documented in published research: models trained repeatedly on generated data compound their own errors and gradually lose the rare patterns of real-world data. The winning formula in 2026: generate synthetic volume for rare edge cases, then let expert humans validate and enrich a carefully chosen subset. I’ve watched clients cut labeling costs by 40% while actually improving model robustness once they stopped treating synthetic data as a full replacement.
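One way to pick that “carefully chosen subset” is stratified sampling by edge-case category, so rare categories always get some expert review even when a flat random sample would miss them. A minimal sketch; the sample schema, category names, 10% review fraction, and five-item floor are assumptions, not a recommended setting:

```python
import random
from collections import defaultdict


def pick_review_subset(samples, review_fraction=0.1, min_per_category=5, seed=0):
    """Choose synthetic samples for expert validation, stratified by category.

    `samples` is a list of dicts with at least a "category" key (hypothetical schema).
    Each category gets max(min_per_category, fraction * size) reviewed items,
    capped at the category size, so rare edge cases are never skipped entirely.
    """
    rng = random.Random(seed)  # fixed seed keeps the review set reproducible
    by_category = defaultdict(list)
    for s in samples:
        by_category[s["category"]].append(s)

    subset = []
    for _category, items in sorted(by_category.items()):
        k = max(min_per_category, round(len(items) * review_fraction))
        k = min(k, len(items))
        subset.extend(rng.sample(items, k))
    return subset


synthetic = (
    [{"id": i, "category": "pedestrian_at_night"} for i in range(200)]
    + [{"id": 200 + i, "category": "flooded_road"} for i in range(12)]
)
to_review = pick_review_subset(synthetic)
```

With 200 night-time pedestrian samples and 12 flooded-road samples, a flat 10% sample would send only about one flooded-road case to an expert; the floor guarantees five, for 25 reviewed items in total.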

3. Domain experts replace crowd-sourced generalists

Crowd workers were fine for simple image tags in 2022. Today, medical AI needs radiologists spotting subtle tissue variations, legal LLMs require attorneys labeling reasoning chains, and industrial predictive maintenance demands engineers who understand machinery failure modes. The accuracy gap is no longer debatable: domain expertise has become the baseline for any high-stakes application.

4. Regulatory pressure forces traceable human oversight

Under the EU AI Act, most obligations for high-risk systems apply from August 2026, including Article 14’s requirement that real people be able to oversee and intervene. Together with the Act’s data-governance rules (Article 10), which cover annotation, labeling, and examination for possible biases, that means in practice that every label needs documented provenance: who annotated it, their qualifications, how bias was checked, and the full audit trail. Teams ignoring this are already seeing projects stalled in compliance reviews.
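A per-label provenance record can be as simple as a structured entry written to an append-only log. The sketch below chains each entry to the previous one with a SHA-256 hash, so a silent edit to any past label is detectable. The field list is our assumption about what an auditor might ask for, not a list taken from the AI Act, and `LabelRecord` and `AuditLog` are hypothetical names:

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class LabelRecord:
    # Illustrative fields only; adapt to what your compliance team requires.
    item_id: str
    label: str
    annotator_id: str
    annotator_qualification: str  # e.g. "board-certified radiologist"
    guideline_version: str
    bias_check: str               # how bias was checked for this label
    timestamp: str                # ISO 8601, UTC


def _digest(prev_hash: str, record: dict) -> str:
    """Hash of the previous entry's hash plus this record's canonical JSON."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the one before it."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries: list[dict] = []

    def append(self, record: LabelRecord) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        data = asdict(record)
        digest = _digest(prev_hash, data)
        self.entries.append({"record": data, "prev_hash": prev_hash, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append(LabelRecord("scan-0042", "benign", "ann-17", "board-certified radiologist",
                       "guidelines-v3", "demographic balance reviewed", "2026-03-01T10:15:00Z"))
log.append(LabelRecord("scan-0043", "malignant", "ann-09", "board-certified radiologist",
                       "guidelines-v3", "demographic balance reviewed", "2026-03-01T10:17:00Z"))
print(log.verify())  # True

log.entries[0]["record"]["label"] = "malignant"  # simulate a silent edit
print(log.verify())  # False
```

A hash chain is only tamper-evident if the latest hash is also stored somewhere the annotation team can’t rewrite, such as a separate system of record.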

5. AI-assisted automation finally pairs with strong human-in-the-loop

Pre-annotation tools can now handle roughly 80% of repetitive work with acceptable accuracy, but the remaining 20% (the ambiguous, contextual, or safety-critical cases) still demands human judgment. The smartest pipelines use automation as a force multiplier while keeping humans firmly in control of quality gates and ethical decisions.
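That split usually comes down to a routing rule on the pre-annotation model’s confidence. A minimal sketch, assuming the model emits a score in [0, 1] and that items can be flagged as safety-critical; `PreLabel`, `route`, and the 0.95 threshold are hypothetical placeholders you would tune on held-out data:

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float             # model score in [0, 1]
    safety_critical: bool = False


def route(prelabels, auto_accept_threshold=0.95):
    """Split model pre-annotations into auto-accepted and human-review queues.

    Anything below the threshold goes to a person, and so does anything
    flagged safety-critical, regardless of its score.
    """
    accepted, needs_review = [], []
    for p in prelabels:
        if p.confidence >= auto_accept_threshold and not p.safety_critical:
            accepted.append(p)
        else:
            needs_review.append(p)
    return accepted, needs_review


batch = [
    PreLabel("img-1", "car", 0.99),
    PreLabel("img-2", "cyclist", 0.71),
    PreLabel("img-3", "pedestrian", 0.98, safety_critical=True),
    PreLabel("img-4", "traffic_light", 0.97),
]
accepted, needs_review = route(batch)
print([p.item_id for p in accepted])      # ['img-1', 'img-4']
print([p.item_id for p in needs_review])  # ['img-2', 'img-3']
```

The pedestrian goes to a person even at 0.98 confidence. It is also worth spot-checking a random slice of the auto-accepted queue, because model confidence scores are often poorly calibrated.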

6. Quality metrics and bias detection become standard operating procedure

Inter-annotator agreement scores (Cohen’s Kappa, Fleiss’ Kappa), consensus protocols, and ISO/IEC 5259 compliance checks are no longer optional extras. Shift-left quality assurance, which catches instruction problems in pilot phases instead of after thousands of labels, has become table stakes. Teams that measure reliability early avoid the expensive rework cycles that used to eat 30–40% of project budgets.
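Cohen’s Kappa corrects raw agreement between two annotators for the agreement expected by chance: κ = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e the agreement implied by each annotator’s label frequencies. A from-scratch sketch with made-up defect-inspection labels (in production code, scikit-learn’s `cohen_kappa_score` computes the same statistic, and Fleiss’ Kappa extends the idea to more than two annotators):

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labelled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Observed agreement: share of items where both annotators chose the same label.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: probability both independently pick label k, summed over k.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if p_e == 1:
        # Both annotators used one identical label everywhere; kappa is undefined.
        return float("nan")
    return (p_o - p_e) / (1 - p_e)


annotator_1 = ["ok", "ok", "defect", "ok", "defect", "ok", "ok", "defect", "ok", "ok"]
annotator_2 = ["ok", "defect", "defect", "ok", "defect", "ok", "ok", "ok", "ok", "ok"]
kappa = cohens_kappa(annotator_1, annotator_2)
print(f"Cohen's kappa: {kappa:.2f}")  # Cohen's kappa: 0.52
```

The annotators agree on 8 of 10 items, but because both label most items “ok”, chance agreement is already 0.58, so κ ≈ 0.52. On the commonly cited Landis and Koch scale that is only moderate agreement, exactly the kind of signal a pilot phase should surface before full-scale labeling.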

These aren’t distant predictions. Companies I’ve worked with that started adapting in late 2025 are already seeing faster model deployment, fewer audit findings, and measurably better performance in production.

What enterprises should do right now to stay ahead

Start by auditing your current annotation pipeline against these six shifts: map your multimodal needs, test hybrid synthetic-human workflows on one pilot dataset, identify where domain experts would move the needle, and build traceability into every process. Budget for quality over quantity, and treat data annotation as core infrastructure rather than a line-item expense. The organizations that embed these practices early will turn regulatory pressure into a competitive moat instead of a roadblock.

When the pressure is on to deliver accurate, compliant, and multilingual datasets at scale, the right partner makes all the difference. Artlangs Translation has been quietly leading the way for years. The company works across more than 230 languages and specializes in translation services, video localization, short drama subtitle localization, game localization for short dramas, multi-language audiobook dubbing, and especially multilingual data annotation with transcription, bringing the same precision and real-world experience to every labeling project. Its track record of successful cases gives teams confidence that their data pipelines will meet 2026’s toughest demands, without compromise and with full audit-ready traceability.