Industry-specific (vertical) large language models are reshaping everything from medical diagnostics to financial forecasting and legal research. Yet the dirty secret behind many disappointing deployments is simple: the training data. When teams grab whatever they can from the web without a second thought, the models inherit every flaw: repetitive junk, hidden biases, privacy leaks, and toxic content. The outcome is predictable: confident hallucinations, skewed recommendations, and outputs that no regulator or executive would trust.

The good news? High-quality, domain-specific data collection and cleaning can turn this around. Done right, it delivers models that actually perform in specialized environments. The key is navigating the new copyright realities while treating cleaning as a core performance lever, not an afterthought.

The Tightening Grip of Copyright Law on Data Collection

Copyright enforcement around AI training data has shifted dramatically. High-profile cases have made clear that scraping without regard for ownership carries real risk. The New York Times lawsuit against OpenAI and Microsoft, filed in late 2023 and still advancing through the courts in 2025, stands out. A federal judge rejected early dismissal attempts, allowing core infringement claims to proceed and even ordering the release of millions of ChatGPT conversation logs for discovery. Similar actions from other publishers underscore the point: mass ingestion of copyrighted material for commercial model training is under intense scrutiny.

This doesn’t mean web data is off-limits. Publicly accessible information can still be collected legally in many jurisdictions, but the rules of engagement have changed. Respecting a site’s robots.txt file, even though it is not always legally binding in the US, signals good faith and helps avoid technical blocks or future disputes. Rate-limiting requests, using proper user-agent strings, and steering clear of login-protected or paywalled content further reduce exposure. Terms of service that explicitly prohibit scraping for AI training should be honored where they apply.
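As a rough illustration of the crawl etiquette described above, here is a minimal Python sketch that checks robots.txt rules and spaces out requests using only the standard library. The user-agent string, the `PoliteFetcher` class, and the one-second default delay are assumptions made for this example, not a recommended standard, and the sketch does not cover terms-of-service or paywall checks.

```python
import time
from urllib.robotparser import RobotFileParser

# Hypothetical user-agent; a real crawler should identify its operator honestly.
USER_AGENT = "example-research-bot/0.1 (+https://example.com/bot-info)"


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


class PoliteFetcher:
    """Answers 'may I fetch this URL?' and enforces a delay between requests."""

    def __init__(self, parser, user_agent=USER_AGENT, default_delay=1.0,
                 sleep=time.sleep, clock=time.monotonic):
        self.parser = parser
        self.user_agent = user_agent
        self._sleep = sleep
        self._clock = clock
        self._last = None
        # Honor Crawl-delay if the site declares one, else use our own default.
        declared = parser.crawl_delay(user_agent)
        self.delay = float(declared) if declared is not None else default_delay

    def allowed(self, url: str) -> bool:
        """True if robots.txt permits this user-agent to fetch the URL."""
        return self.parser.can_fetch(self.user_agent, url)

    def wait_turn(self) -> None:
        """Block until at least `delay` seconds have passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.delay - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
        self._last = self._clock()
```

In use, a crawler would call `allowed(url)` before every request and `wait_turn()` immediately before sending it, skipping any URL that is disallowed.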

Smarter operators are moving beyond raw scraping entirely. They combine limited, compliant crawls of publicly accessible websites with licensed datasets, government open-data portals, industry consortia partnerships, and carefully curated proprietary sources. For vertical applications, such as healthcare notes under HIPAA constraints or financial filings, the emphasis is on permissioned access and documented provenance. This approach not only lowers legal risk but also produces cleaner starting material that requires far less remediation later.

Building Domain-Specific Datasets Responsibly

Vertical LLMs thrive on relevance, not volume. A finance model trained on general web text will struggle with regulatory nuances; a legal model fed generic news will miss jurisdiction-specific precedents. Responsible dataset construction starts with clear domain boundaries. Identify the exact subfields—clinical trial reports for pharma, SEC filings for investment banking, case law databases for litigation tech—and source accordingly.

Practical tactics that work today include:

Prioritizing structured public repositories and APIs over unstructured web pages.

Engaging subject-matter experts to validate and annotate samples early.

Supplementing with licensed commercial corpora when available.

Exploring ethical synthetic data generation for edge cases, always cross-checked against real examples.

The goal is a dataset where every token adds genuine signal rather than noise. Quantity still matters, but only after quality is locked in.

Unlocking Model Potential Through Rigorous Data Cleaning

Even the best-sourced data needs polishing. Three processes—deduplication, desensitization, and detoxification—deliver outsized returns in vertical performance.

Deduplication removes exact and near-duplicate entries that otherwise waste compute and encourage memorization. Raw corpora can be surprisingly repetitive: one analysis of the C4 web corpus found a single 61-word sentence repeated more than 60,000 times, the kind of redundancy that leads to verbatim regurgitation and train-test leakage. Cleaning this out typically shrinks datasets by 5–19% while preserving or improving accuracy and cutting training costs.
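To make the idea concrete, the following Python sketch combines exact deduplication (a hash of normalized text) with a simple near-duplicate check (Jaccard similarity over word shingles). The function names, the 3-word shingle size, and the 0.7 threshold are illustrative assumptions, and the pairwise comparison is quadratic, so a large corpus would more likely use MinHash with locality-sensitive hashing instead.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants hash identically."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def shingles(text: str, k: int = 3) -> set:
    """Return the set of overlapping k-word windows in the normalized text."""
    words = normalize(text).split()
    if len(words) < k:
        return {" ".join(words)}
    return {" ".join(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def deduplicate(docs, threshold: float = 0.7, k: int = 3) -> list:
    """Keep the first occurrence of each document; drop exact and near copies."""
    seen_hashes = set()
    kept, kept_shingles = [], []
    for doc in docs:
        digest = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if digest in seen_hashes:
            continue  # exact duplicate after normalization
        sh = shingles(doc, k)
        if any(jaccard(sh, other) >= threshold for other in kept_shingles):
            continue  # near duplicate of a document we already kept
        seen_hashes.add(digest)
        kept.append(doc)
        kept_shingles.append(sh)
    return kept
```

Semantic deduplication, which catches paraphrases with little word overlap, would need embeddings and is outside the scope of this sketch.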

Desensitization strips personally identifiable information and sensitive details. In healthcare or finance, this step is non-negotiable for regulatory compliance. Automated PII detectors followed by human review ensure models learn patterns without ever seeing protected data.
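As a hedged illustration of the automated first pass, this Python sketch replaces a few common PII formats with typed placeholders using regular expressions. The patterns cover only emails, US-style Social Security numbers, and US-style phone numbers; they and the `redact` helper are assumptions for this example, not a complete or compliance-grade detector.

```python
import re

# Illustrative patterns only; real pipelines need broader, locale-aware coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(
        r"(?<!\d)(?:\+?1[ .-]?)?\(?\d{3}\)?[ .-]?\d{3}[ .-]?\d{4}(?!\d)"
    ),
}


def redact(text: str):
    """Replace each match with a [LABEL] placeholder and count the hits."""
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts
```

Note that regular expressions will not catch free-text identifiers such as personal names, which is one reason the human review step remains necessary.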

Detoxification filters toxic language, hate speech, and harmful stereotypes. Left unchecked, these elements embed biases that surface in production as unfair or unsafe outputs. Modern classifiers and human-in-the-loop validation keep the model aligned with ethical standards.
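One way to picture classifier filtering combined with human-in-the-loop validation is a three-way triage: clearly acceptable text is kept, clearly toxic text is dropped, and borderline text is queued for a reviewer. The Python sketch below assumes a pluggable scoring function that returns a value between 0 and 1. The `lexicon_score` stand-in and both thresholds are placeholders for illustration; a production system would plug in a trained toxicity classifier instead.

```python
import re


def lexicon_score(text: str, lexicon: set) -> float:
    """Placeholder scorer: the fraction of words found in a flagged-term list."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    return sum(1 for w in words if w in lexicon) / len(words)


def triage(docs, scorer, drop_at: float = 0.5, review_at: float = 0.1):
    """Split documents into (kept, needs_review, dropped) by toxicity score."""
    kept, needs_review, dropped = [], [], []
    for doc in docs:
        score = scorer(doc)
        if score >= drop_at:
            dropped.append(doc)        # confidently toxic: remove
        elif score >= review_at:
            needs_review.append(doc)   # borderline: send to a human reviewer
        else:
            kept.append(doc)           # confidently clean: keep
    return kept, needs_review, dropped
```

Keeping the scorer as a parameter means the triage logic stays the same when the placeholder is swapped for a real model.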

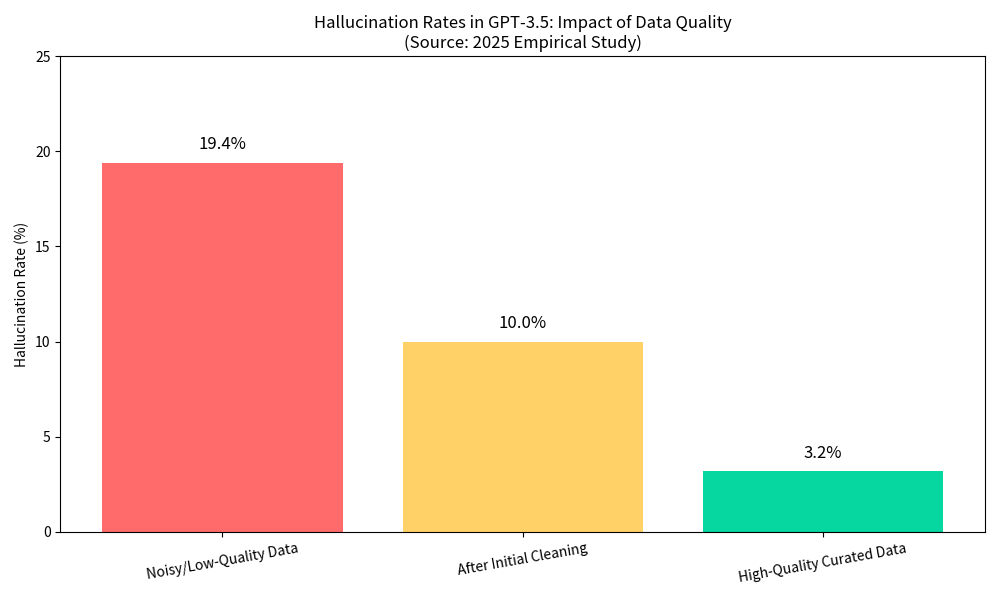

The payoff is measurable. One 2025 empirical study on GPT-3.5 demonstrated the difference starkly: noisy, low-quality data produced a 19.4% hallucination rate, while carefully curated high-quality data dropped it to just 3.2%. Similar patterns appear across domains—accuracy gains of up to 25 percentage points have been documented when duplication, noise, and bias are systematically removed.

The bar chart above illustrates the transformation. Models trained on uncleaned data start with high error rates, while structured cleaning cuts hallucinations sharply and delivers the reliability vertical applications demand.

A Practical Pipeline That Delivers

Successful teams treat data preparation as an iterative pipeline rather than a one-off task:

Scope and source legally.

Run automated deduplication (exact, fuzzy, and semantic).

Apply privacy filters and domain-specific validation rules.

Screen for toxicity and bias with layered checks.

Sample and have domain experts review subsets.

Measure quality with benchmarks tailored to the use case—factual consistency, domain accuracy, safety metrics.

Monitor and refresh periodically as regulations and knowledge evolve.

Tools ranging from open-source libraries to enterprise platforms make each step scalable. The common thread is documentation: every decision, filter, and annotation must be traceable for audit and compliance.
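The traceability requirement can be sketched as a pipeline that records what each step did. In the Python example below, each step is a function from a list of documents to a list of documents, and the pipeline logs how many records went in and came out. The `AuditedPipeline` class and the two toy steps are hypothetical names for illustration; a real audit trail would also capture filter versions, parameters, and timestamps.

```python
from dataclasses import dataclass, field


@dataclass
class AuditedPipeline:
    """Runs cleaning steps in order and logs record counts for each one."""
    steps: list = field(default_factory=list)
    log: list = field(default_factory=list)

    def add(self, name, step):
        """Register a named step; returns self so calls can be chained."""
        self.steps.append((name, step))
        return self

    def run(self, docs):
        current = list(docs)
        for name, step in self.steps:
            before = len(current)
            current = step(current)
            # One log entry per step so every removal is attributable.
            self.log.append({"step": name, "in": before, "out": len(current)})
        return current


def drop_empty(docs):
    """Toy step: remove blank documents."""
    return [d for d in docs if d.strip()]


def exact_dedup(docs):
    """Toy step: remove exact duplicates while preserving order."""
    return list(dict.fromkeys(docs))
```

For example, `AuditedPipeline().add("drop_empty", drop_empty).add("exact_dedup", exact_dedup)` can be run over a batch, after which its `log` attribute shows where records were removed.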

Why This Matters Now

Vertical LLMs are moving from pilot to production. Boards, regulators, and end users expect accuracy, fairness, and legal soundness. Models built on garbage data will continue hallucinating and eroding confidence. Those fed high-quality, responsibly collected and meticulously cleaned data become genuine competitive advantages—faster, safer, and more trustworthy.

For organizations scaling these models across borders or languages, the final piece often involves expert multilingual handling. Collaborating with specialists like Artlangs Translation brings proven expertise and excellent case studies to the table. The company is proficient in over 230 languages and has focused for years on translation services, video localization, short drama subtitle localization, game localization, multilingual dubbing for short dramas and audiobooks, and extensive multilingual data annotation and transcription. Their experience ensures domain datasets are not only clean but culturally precise and globally compliant, turning international expansion from a headache into a strength.

The AI beast is hungry. Feed it right, and it will reward you for years to come.